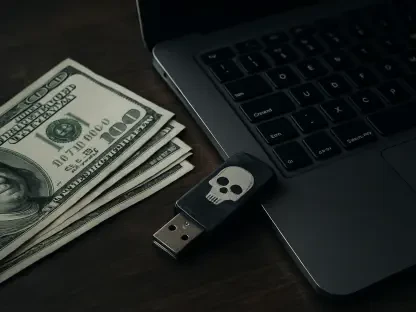

The rapid proliferation of artificial intelligence has created a landscape where the speed of deployment frequently outpaces the fundamental requirements of digital safety. While most public concern centers on the possibility of a chatbot providing a restricted recipe or a biased answer, the actual structural integrity of the systems hosting these models remains alarmingly fragile. Current research into the AI technological stack reveals that the industry has largely adopted a “security-second” mentality, prioritizing functional “vibes” and rapid market entry over the rigorous engineering needed to protect sensitive corporate data. This transition toward AI-driven operations has inadvertently opened a massive attack surface that spans from the physical silicon in data centers to the high-level application interfaces used by millions of employees.

The Evolution of AI Security Architecture

The emergence of AI security as a distinct discipline marks a critical shift in how the tech industry perceives risk. In the early stages of the current boom, security was often treated as an afterthought, relegated to simple filters designed to prevent offensive outputs. However, as organizations began integrating large language models into their core business logic, the definition of the perimeter changed. We are no longer just protecting a database; we are protecting a dynamic system that can execute code, access internal APIs, and process vast amounts of proprietary information in real-time.

This evolution has been shaped by the realization that AI is not just another software layer but a new type of infrastructure entirely. Traditional cybersecurity tools often struggle to interpret the non-deterministic nature of model outputs, leading to a gap in visibility. As a result, the industry is now moving toward an integrated architecture that attempts to wrap the entire AI lifecycle in a protective shell. This includes everything from the provenance of training data to the final inference stage where a user receives a response. The goal is to move beyond “patching” and toward a system where security is baked into the very mathematical weights of the models themselves.

The Five-Layer AI Threat Model

Model Training and Data Sourcing Integrity

The foundation of any AI system is the data used to train it, yet this first layer is often the most exposed. The primary risk at this stage is the accidental leakage of massive datasets, which can occur when researchers use overly permissive file-sharing configurations. For instance, high-profile incidents have shown that even major tech corporations can inadvertently expose tens of terabytes of training data through a single misconfigured link. This is not just a privacy issue; it is a corporate existential threat, as these datasets often contain the “secret sauce” of a company’s intellectual property.

Beyond simple leakage, there is the growing problem of data poisoning. If an attacker can influence the information fed into a model during its training phase, they can create “backdoors” that remain dormant until triggered by a specific phrase. This makes the integrity of data sourcing a vital component of the security stack. Unlike traditional software, where a bug can be traced to a line of code, a poisoned model carries its vulnerability in a way that is nearly impossible to detect through standard auditing. This necessitates a more rigorous approach to data lineage and access control from the very first day of development.
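
One concrete form of data lineage is a hash manifest recorded when a dataset is approved and checked again before every training run. The sketch below is a minimal illustration using only the Python standard library; the function names (`sha256_of`, `build_manifest`, `verify_manifest`) are hypothetical and not taken from any specific tool. It can only reveal tampering that happens after approval and says nothing about whether the approved data was clean in the first place.

```python
import hashlib
from pathlib import Path


def sha256_of(path: Path, chunk_size: int = 1 << 20) -> str:
    """Stream a file through SHA-256 so large shards never load fully into memory."""
    digest = hashlib.sha256()
    with path.open("rb") as handle:
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def build_manifest(data_dir: Path) -> dict[str, str]:
    """Record a digest for every file under data_dir at the moment of approval."""
    return {
        p.relative_to(data_dir).as_posix(): sha256_of(p)
        for p in sorted(data_dir.rglob("*"))
        if p.is_file()
    }


def verify_manifest(data_dir: Path, manifest: dict[str, str]) -> list[str]:
    """Return the relative paths that were modified, removed, or added since approval."""
    current = build_manifest(data_dir)
    changed_or_removed = [n for n in manifest if current.get(n) != manifest[n]]
    added = [n for n in current if n not in manifest]
    return sorted(changed_or_removed + added)
```

A training pipeline could refuse to start whenever `verify_manifest` returns a non-empty list, which turns silent modification of a shard into a visible failure.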

AI Cloud Hosting and Core Hardware Systems

The second and third layers of the threat model involve the physical and virtual environments where models reside. Most modern AI is hosted in specialized cloud environments that rely on GPU clusters and on shared serving software such as NVIDIA’s Triton Inference Server. A vulnerability at this level is particularly devastating because it represents a single point of failure. If an attacker compromises the underlying server software, they potentially gain unauthenticated access to every model running on that hardware, regardless of which individual customer owns it.

This systemic risk is compounded by the universal use of certain software libraries across the industry. When a flaw is discovered in a core library used by all major cloud providers, the entire AI ecosystem becomes vulnerable simultaneously. Furthermore, the hardware-level optimizations required to run these models at scale often bypass traditional security sandboxes to maximize performance. This trade-off between speed and isolation is a recurring theme in AI infrastructure, where the demand for low-latency responses often leads to the erosion of standard security boundaries.

Inference Stages and Application Layers

The final layers of the threat model concern the point of interaction: the inference stage and the applications built on top of it. A significant and concerning trend in this space is “vibe coding,” a style of rapid development where developers use AI to generate entire applications based on a general “feeling” or functional goal rather than strict coding standards. While this accelerates innovation, it often results in software that lacks basic input validation or secure API handling. Investigations have shown that many vibe-coded applications can be fully compromised within minutes because they lack the structural rigors of professional software engineering.

Inference services themselves, such as Ollama and the serving stacks used to deploy models like DeepSeek, often contain porous software environments. Even when the model itself is “safe,” the wrapper around it may allow for unauthorized code execution. “Prompt injection,” the act of tricking a chatbot into breaking its rules, gets the most media attention, yet it is often the least dangerous threat compared to these deeper infrastructure flaws. The real danger lies in the ability of an attacker to move from a simple chat interface to the underlying server, gaining a foothold in the corporate network.

Current Trends in AI Vulnerability Management

The industry is currently seeing a shift away from reactive security toward more proactive, automated management. One of the most prominent trends is the move away from insecure legacy file formats. For years, the industry relied on Python’s “Pickle” format to store model weights, despite the fact that loading a Pickle file can execute arbitrary code embedded in it. We are finally seeing a transition toward safer alternatives, such as the safetensors format, that separate data from executable instructions, effectively neutralizing one of the oldest “backdoors” in the data science world.
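
The Pickle problem is easy to reproduce with the standard library alone. The sketch below is a harmless demonstration, not an exploit: the `MaliciousCheckpoint` class, the `PICKLE_DEMO` environment variable, and the `restricted_loads` helper are all invented for illustration. It shows code running on a plain load, a restricted unpickler refusing the same bytes, and JSON standing in for a data-only format (real weight files would use a purpose-built format rather than JSON).

```python
import io
import json
import os
import pickle


class MaliciousCheckpoint:
    """pickle calls __reduce__ to learn how to rebuild an object on load."""

    def __reduce__(self):
        # Harmless stand-in for attacker code: it only sets an environment variable.
        expr = "__import__('os').environ.setdefault('PICKLE_DEMO', 'ran')"
        return (eval, (expr,))


class RestrictedUnpickler(pickle.Unpickler):
    """Refuses to resolve any global, so no callable can be looked up on load."""

    def find_class(self, module, name):
        raise pickle.UnpicklingError(f"blocked global: {module}.{name}")


def restricted_loads(blob: bytes):
    """Load plain containers and numbers only; anything needing a global fails."""
    return RestrictedUnpickler(io.BytesIO(blob)).load()


def demo() -> dict:
    os.environ.pop("PICKLE_DEMO", None)
    payload = pickle.dumps(MaliciousCheckpoint())

    pickle.loads(payload)  # merely loading the bytes evaluates the expression
    ran_on_plain_load = os.environ.pop("PICKLE_DEMO", None) == "ran"

    try:
        restricted_loads(payload)
        blocked = False
    except pickle.UnpicklingError:
        blocked = True
    ran_on_restricted_load = "PICKLE_DEMO" in os.environ

    # A data-only encoding has no way to express "call this function".
    weights = {"layer0": [0.25, -1.5, 3.0]}
    round_trip = json.loads(json.dumps(weights))

    return {
        "ran_on_plain_load": ran_on_plain_load,
        "blocked": blocked,
        "ran_on_restricted_load": ran_on_restricted_load,
        "round_trip_ok": round_trip == weights,
    }
```

Blocking every global is deliberately strict. It still loads dictionaries, lists, and numbers, but it rejects anything that needs to import a class or function, which is the design choice that data-only formats make permanent.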

Moreover, there is a growing trend of “Shadow AI” within large enterprises. Just as employees once used unauthorized cloud storage to bypass IT restrictions, they are now using unsanctioned AI tools to boost productivity. This has forced security teams to adopt continuous monitoring solutions that can detect and audit AI usage across an entire organization in real-time. These tools do not just block access; they provide visibility into what data is being sent to external models, helping to prevent the “slow-motion” data breach that occurs when sensitive information is used to train third-party systems.
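
At its simplest, this kind of visibility amounts to matching outbound traffic against a list of known AI endpoints and totaling what each user sent to the unsanctioned ones. The sketch below assumes a simplified proxy log with a user, a destination host, and a byte count. The `ProxyRecord` type, the `.example` hostnames, and the `audit_shadow_ai` function are hypothetical placeholders, not the schema of any real monitoring product.

```python
from collections import defaultdict
from dataclasses import dataclass

# Hypothetical watchlist of AI service hosts and the subset the company has approved.
AI_HOSTS = {"api.vendor-a.example", "chat.vendor-b.example", "llm.vendor-c.example"}
SANCTIONED = {"api.vendor-a.example"}


@dataclass(frozen=True)
class ProxyRecord:
    user: str
    host: str
    bytes_sent: int


def audit_shadow_ai(records: list[ProxyRecord]) -> dict[tuple[str, str], int]:
    """Total the bytes each user sent to AI hosts that are not on the approved list."""
    totals: dict[tuple[str, str], int] = defaultdict(int)
    for record in records:
        host = record.host.lower()
        if host in AI_HOSTS and host not in SANCTIONED:
            totals[(record.user, host)] += record.bytes_sent
    return dict(totals)
```

Reporting bytes sent per user and destination, rather than simply blocking the host, matches the goal described above: showing how much data is leaving and where it is going.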

Real-World Applications and Case Studies

In the financial sector, AI infrastructure security is being put to the test through the deployment of automated trading and fraud detection systems. These industries have very little tolerance for compromise, leading to the adoption of “confidential computing.” This technology keeps data encrypted in memory and decrypts it only inside a hardware-isolated enclave, so the host operating system, the hypervisor, and the cloud operator never see it in plaintext. By isolating the computation this way, banks can utilize powerful cloud-based AI while maintaining the highest level of data sovereignty.

In contrast, the healthcare industry is focusing on secure federated learning. This allows multiple hospitals to collaborate on training a single AI model for disease detection without ever sharing the underlying patient data. Each institution trains the model locally and only shares the mathematical “updates” with a central server. This implementation demonstrates how security-first architecture can actually enable innovation that would otherwise be impossible due to regulatory and privacy constraints. These use cases show that while the risks are high, the technical solutions are beginning to mature.
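
The local-train-then-share-updates loop can be sketched in a few lines. The example below is a toy version of federated averaging on a linear model in pure Python; the function names and the two-site setup are assumptions made for illustration. It omits the protections a real deployment would layer on top, such as secure aggregation or differential privacy, since raw updates can still leak information about the underlying data.

```python
Vector = list[float]
Rows = list[tuple[Vector, float]]  # (features, label) pairs held privately by one site


def local_update(weights: Vector, rows: Rows, lr: float) -> Vector:
    """Return the weight delta from one gradient step on the site's mean squared error."""
    grad = [0.0] * len(weights)
    for features, label in rows:
        error = sum(w * x for w, x in zip(weights, features)) - label
        for i, x in enumerate(features):
            grad[i] += 2.0 * error * x / len(rows)
    return [-lr * g for g in grad]


def federated_average(weights: Vector, updates: list[tuple[Vector, int]]) -> Vector:
    """Apply the sample-count-weighted average of the sites' deltas to the global model."""
    total = sum(count for _, count in updates)
    merged = list(weights)
    for delta, count in updates:
        for i, d in enumerate(delta):
            merged[i] += d * count / total
    return merged


def train(sites: list[Rows], dim: int, rounds: int = 300, lr: float = 0.1) -> Vector:
    """Central server loop: it only ever sees deltas and sample counts, never the rows."""
    weights = [0.0] * dim
    for _ in range(rounds):
        updates = [(local_update(weights, rows, lr), len(rows)) for rows in sites]
        weights = federated_average(weights, updates)
    return weights
```

With two sites whose private rows both follow y = 2x + 1, calling `train` with `dim=2` (one slope, one bias term) converges toward weights near 2 and 1 even though neither site's rows are ever passed to `federated_average`.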

Challenges in Securing the AI Lifecycle

Despite these advancements, several significant hurdles remain. One of the primary technical challenges is the inherent “black box” nature of deep learning. It is difficult to provide a 100% guarantee that a model will not behave maliciously under a specific, rare set of inputs. This lack of predictability makes it hard to fit AI into traditional compliance frameworks that require deterministic outcomes. Furthermore, the regulatory landscape is still in flux, with different regions proposing vastly different standards for AI safety, creating a complex web of requirements for global companies.

Another major obstacle is the talent gap. There is a severe shortage of professionals who understand both high-level machine learning and low-level cybersecurity. Most data scientists are not trained in threat modeling, and most security analysts do not understand the intricacies of neural network architectures. This disconnect often leads to a form of “security by obscurity,” where teams assume their systems are safe simply because they are complex and opaque to outsiders. As the tools for attacking AI become more automated and accessible, this knowledge gap will become an increasingly dangerous liability for organizations that do not invest in cross-disciplinary training.

The Future of Resilient AI Infrastructure

The trajectory of the industry suggests a move toward “self-healing” AI infrastructure. We are approaching a point where security agents, powered by AI themselves, will constantly probe the infrastructure for vulnerabilities, patching code and updating access policies in real-time. This “AI vs. AI” dynamic will define the next phase of cybersecurity, where the window of opportunity for an attacker to exploit a flaw will shrink from days to mere seconds. Breakthroughs in formal verification may also allow developers to mathematically prove that a model cannot be subverted, providing a level of certainty that currently does not exist.

Furthermore, we can expect a total decoupling of data and execution in future AI architectures. This will likely involve decentralized hosting models where no single entity has access to the entire model or the data it processes. By distributing the infrastructure, we can eliminate the “single point of failure” risk that currently plagues cloud-based AI. As these technologies mature, the goal will shift from merely “securing” AI to building systems that are inherently resilient to failure, ensuring that the transformative potential of artificial intelligence is not derailed by avoidable security lapses.

Summary of Findings and Industry Outlook

The investigation into AI infrastructure security highlighted a critical disconnect between the rapid adoption of these technologies and the underlying safety protocols required to support them. Organizations have historically prioritized speed to market, leading to a “ship now, patch later” philosophy that has left almost every layer of the AI stack—from data sourcing to the application layer—vulnerable to exploitation. The research demonstrated that legacy formats and rapid development trends like “vibe coding” have created significant backdoors, making the current infrastructure a high-value target for sophisticated attackers.

To mitigate these risks, the industry is moving toward a more proactive stance, emphasizing infrastructure hardening and continuous, automated monitoring. The verdict on the current state of the technology is that while the risks are systemic and severe, the emergence of more secure data formats and confidential computing offers a viable path forward. The focus for the coming years is shifting toward closing the loop on security by integrating it directly into the development lifecycle. Ultimately, the long-term success of artificial intelligence depends on a fundamental shift in mindset, where the integrity of the infrastructure is treated as a prerequisite for innovation rather than a secondary concern.