Rupert Marais is a leading security specialist with a deep focus on the intersection of endpoint protection, network management, and emerging software threats. With extensive experience in hardening developer environments, he has become a key voice in navigating the shifting landscape of supply chain security. As organizations increasingly rely on open-source ecosystems, Rupert’s insights help bridge the gap between rapid software delivery and the robust security protocols needed to defend against sophisticated state-sponsored and financially motivated actors.

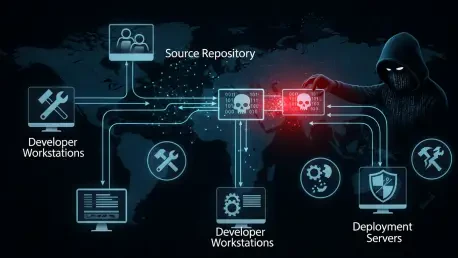

The following discussion explores the evolving tactics of modern threat groups, the specific vulnerabilities found within CI/CD pipelines, and the strategic shifts necessary to secure the future of software development.

Attackers recently compromised vulnerability scanners to harvest CI/CD secrets and cloud credentials across thousands of organizations. How does this shift the traditional security paradigm for developers, and what specific technical hurdles make these “security-on-security” attacks particularly difficult to detect within high-speed development pipelines?

This shift is particularly alarming because it turns our primary defensive tools into offensive weapons. When a group like TeamPCP compromises a tool like Trivy, they aren’t just hitting one target; they are gaining a foothold in the automated pipelines of more than 100,000 users and contributors. The technical hurdle is that security tools often need high-level permissions to scan containers or Kubernetes configurations, so a poisoned tool already holds the “keys to the kingdom” and can exfiltrate SSH keys and cloud credentials. In high-speed environments these tools run automatically and frequently, generating so much activity that malicious exfiltration easily blends in with legitimate traffic. We saw this play out across more than 10,000 organizations, which shows that the trust we place in our security stack needs a serious “zero-trust” rethink.
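One concrete way to apply that “zero-trust” rethink to the security stack itself is to stop trusting a scanner because of its name or version tag and instead pin it to a digest you verified out of band. The sketch below is illustrative only: it assumes the pipeline downloads a scanner binary to disk and that the expected SHA-256 digest is stored in your own configuration; the function names are hypothetical, not part of Trivy or any CI product.

```python
import hashlib
import hmac
import tempfile
from pathlib import Path


def sha256_of(path: Path, chunk_size: int = 1 << 16) -> str:
    """Stream the file so large binaries do not need to fit in memory."""
    digest = hashlib.sha256()
    with path.open("rb") as fh:
        for chunk in iter(lambda: fh.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def verify_pinned_tool(path: Path, expected_sha256: str) -> None:
    """Raise if the tool on disk does not match the digest pinned in config.

    Checking bytes rather than a version tag means a re-published or
    force-pushed release that reuses the same name is still rejected.
    """
    actual = sha256_of(path)
    if not hmac.compare_digest(actual, expected_sha256.lower()):
        raise RuntimeError(
            f"{path} digest {actual} does not match pinned {expected_sha256}"
        )


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as d:
        tool = Path(d) / "scanner"
        tool.write_bytes(b"trusted build")
        pinned = hashlib.sha256(b"trusted build").hexdigest()
        verify_pinned_tool(tool, pinned)  # matches, so no exception
        tool.write_bytes(b"tampered build")
        try:
            verify_pinned_tool(tool, pinned)
        except RuntimeError as exc:
            print("blocked:", exc)
```

The important design choice is where the pinned digest comes from: if it is fetched from the same channel as the binary, an attacker who controls that channel controls both.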

Using AI-enabled social engineering to build realistic workspaces and digital clones of company founders represents a sophisticated threat to open-source maintainers. What subtle indicators should developers look for during these collaborations, and what operational changes can prevent a single hijacked account from poisoning packages used by millions?

The Axios incident showed us that attackers are no longer just sending suspicious emails; they are building entire digital ecosystems, including fake Slack workspaces and cloned personas of company founders, to lure maintainers. Developers should be wary of any collaboration that suddenly shifts to a different platform or requires a “mandatory” software update, such as the fake Teams update that delivered a Remote Access Trojan (RAT) to the Axios maintainer. To prevent a single hijacked account from causing a catastrophe, we must move away from sole-maintainer control of critical packages like Axios, which sees 100 million weekly downloads. Implementing multi-party signing for releases and enforcing strict branch protection rules are no longer optional if we want to protect the downstream users who rely on these libraries.
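The core rule behind multi-party release approval can be expressed as a small policy check: a release is publishable only if enough maintainers other than the publisher have approved the exact artifact. This is a hypothetical sketch, not the API of npm, Sigstore, or any registry; it assumes each approval’s cryptographic signature has already been verified upstream and only models the quorum logic.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Approval:
    maintainer: str
    artifact_digest: str  # digest of the exact tarball the maintainer reviewed


def release_is_authorized(
    author: str,
    artifact_digest: str,
    approvals: list[Approval],
    maintainers: set[str],
    min_other_approvers: int = 1,
) -> bool:
    """True only if enough *other* known maintainers approved these exact bytes."""
    approvers = {
        a.maintainer
        for a in approvals
        if a.maintainer in maintainers            # ignore unknown accounts
        and a.maintainer != author                # a hijacked publisher cannot self-approve
        and a.artifact_digest == artifact_digest  # approval must cover this artifact
    }
    return len(approvers) >= min_other_approvers
```

For example, `release_is_authorized("alice", "d1", [Approval("alice", "d1")], {"alice", "bob"})` returns `False`: a stolen maintainer session on its own is not enough to publish.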

While implementing a Software Bill of Materials (SBOM) provides an ingredients list for code, management at scale remains a challenge for many firms. How can organizations effectively use AI agents to automate the identification of compromised packages, and what metrics determine if an SBOM strategy is actually reducing exposure time?

The sheer volume of components in modern apps makes manual SBOM management impossible, but AI agents can bridge that gap by continuously cross-referencing your “ingredients list” against real-time threat intelligence. For instance, when a package like Axios is backdoored, an AI agent can instantly scan your entire environment to identify exactly where that version is running, rather than waiting for a manual audit. The key metric for success here is “Mean Time to Inventory,” which measures how quickly you can locate a newly disclosed vulnerability across your infrastructure. If you can’t identify your exposure within minutes of a compromise being announced, your SBOM strategy isn’t providing the agility required to counter modern “smash-and-grab” attacks.
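Whether the lookup is driven by an AI agent or a scheduled job, the underlying step is the same: match every SBOM you hold against the package versions named in an advisory, then record how long that took. The sketch below assumes CycloneDX-style JSON (a top-level `components` array whose entries may nest further `components`); the package versions used in examples are placeholders, not real advisory data. `mean_time_to_inventory` is one plausible way to compute the metric described above, averaging the gap between disclosure and the moment every affected asset was located.

```python
import json
from datetime import datetime, timedelta
from statistics import mean


def find_affected(sbom_json: str, bad: set[tuple[str, str]]) -> list[tuple[str, str]]:
    """Return (name, version) pairs in one CycloneDX-style SBOM that match an advisory."""
    doc = json.loads(sbom_json)
    hits = []
    stack = list(doc.get("components", []))
    while stack:
        comp = stack.pop()
        key = (comp.get("name"), comp.get("version"))
        if key in bad:
            hits.append(key)
        # Components can nest (for example, bundled dependencies), so walk them too.
        stack.extend(comp.get("components", []))
    return sorted(hits)


def mean_time_to_inventory(incidents: list[tuple[datetime, datetime]]) -> timedelta:
    """Average of (all affected assets located - public disclosure) across incidents."""
    gaps = [(located - disclosed).total_seconds() for disclosed, located in incidents]
    return timedelta(seconds=mean(gaps))


if __name__ == "__main__":
    # Hypothetical inventory: one SBOM per service.
    sboms = {
        "checkout-api": json.dumps({"components": [{"name": "axios", "version": "9.9.9"}]}),
        "admin-ui": json.dumps({"components": [{"name": "react", "version": "18.0.0"}]}),
    }
    advisory = {("axios", "9.9.9")}  # placeholder version, not a real advisory
    for service, sbom in sboms.items():
        if find_affected(sbom, advisory):
            print("affected:", service)
```

Tracking the metric per incident, rather than only as a running average, makes it easier to see whether a specific SBOM gap (an unscanned service, a stale SBOM) caused a slow response.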

Enforcing a mandatory 24-hour delay on downloading new software package versions can sidestep many supply-chain infections that are discovered shortly after release. What are the practical trade-offs of this policy for fast-moving teams, and how can organizations enforce it without stifling the agility required for modern deployment?

The primary trade-off is a slight delay in accessing the latest features or non-critical bug fixes, but given that many supply chain attacks are detected within 12 hours, a 24-hour buffer is a powerful defensive move. The malicious Axios releases were live for only about three hours, so a team with a 24-hour delay policy would never have pulled them. To enforce this without slowing down developers, organizations can use “curated” internal repositories or proxy servers that automatically hold new versions in a sandbox for a day. It’s about creating a “safety gap” that allows the broader security community to find and report poisoned releases before they reach your production environment.

As threat actors adopt voice and video cloning to impersonate executives or colleagues in meetings, traditional digital authentication is becoming insufficient. Beyond multi-factor authentication, what role do “secure phrases” or physical verification objects play in everyday operations, and how do you successfully train a remote workforce to utilize them?

We are entering an era where we can no longer trust our eyes or ears in a digital meeting, making physical verification a necessity. “Secure objects” involve having a unique physical item on your desk—like a specific branded mug or a challenge-response card—that you can hold up to the camera to prove you are really you. Training a remote workforce involves normalizing these “weird” security checks so they don’t feel awkward during a high-pressure call with a CEO. You have to build a culture where asking for a secure phrase or a physical verification is seen as a sign of professional competence rather than a lack of trust, especially since North Korean groups are already using AI to create incredibly believable social engineering lures.
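A challenge-response card works because the verifier asks for a different cell each time, so a voice or video clone cannot simply replay an earlier answer. The following is a hypothetical scheme, not a standard product: each person’s printed card is derived from a secret held by the security team with HMAC-SHA256, the verifier names a random cell such as “C4”, and the person on camera reads the code from their physical card.

```python
import hashlib
import hmac
import secrets

ROWS = "ABCDE"
COLS = range(1, 6)


def card_code(secret: bytes, cell: str) -> str:
    """Derive the 4-digit code printed in one cell of a person's card."""
    mac = hmac.new(secret, cell.encode(), hashlib.sha256).digest()
    return f"{int.from_bytes(mac[:4], 'big') % 10_000:04d}"


def print_card(secret: bytes) -> list[str]:
    """Render the grid that is printed once and kept at the person's desk."""
    lines = ["    " + "  ".join(f"{c:>4}" for c in COLS)]
    for r in ROWS:
        lines.append(f"{r}   " + "  ".join(card_code(secret, f"{r}{c}") for c in COLS))
    return lines


def new_challenge() -> str:
    """The verifier picks a random cell so previously heard answers are useless."""
    return f"{secrets.choice(ROWS)}{secrets.choice(COLS)}"


def check_response(secret: bytes, cell: str, spoken: str) -> bool:
    """Compare the code read aloud with the expected one in constant time."""
    return hmac.compare_digest(card_code(secret, cell).encode(), spoken.strip().encode())


if __name__ == "__main__":
    demo_secret = b"hypothetical-per-person-secret"
    print("\n".join(print_card(demo_secret)))
    cell = new_challenge()
    print("verifier asks for", cell)
```

With only 25 cells, the card should be reissued periodically or after any suspected exposure; the scheme raises the cost of impersonation rather than eliminating it.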

Vulnerability scanners, static analysis tools, and Python packages are increasingly being targeted to gain initial access to larger clusters. In what ways should security teams rethink their lateral movement protections, and what specific steps can be taken to isolate developer environments from broader corporate infrastructure?

The recent attacks on KICS and LiteLLM demonstrate that attackers are using security tools as a springboard to move laterally into cloud environments and Kubernetes clusters. Security teams must treat developer environments as “high-risk zones” and implement strict network segmentation to ensure that a compromised CI/CD pipeline doesn’t have a direct path to the broader corporate network. Specific steps include using ephemeral build nodes that are destroyed after every job and strictly limiting the scope of secrets; a scanner should never have permissions that allow it to modify production databases. We need to stop assuming that internal tools are inherently safe just because they are behind a firewall.
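The two isolation rules above (ephemeral build nodes and narrowly scoped secrets for scanners) can be enforced as a pre-merge check on pipeline definitions. This is a sketch under assumptions: the `JobSpec` model and the scope strings are hypothetical and would need to be mapped onto your CI system’s job configuration and your cloud provider’s real permission names.

```python
from dataclasses import dataclass

# Hypothetical scope vocabulary: the only permissions a scanner job may hold.
SCANNER_ALLOWED_SCOPES = frozenset({"registry:pull", "repo:read", "findings:write"})


@dataclass(frozen=True)
class JobSpec:
    name: str
    role: str                 # e.g. "scanner", "deployer"
    scopes: frozenset[str]    # permissions granted to the job's credentials
    ephemeral_runner: bool    # True if the build node is destroyed after the job


def policy_violations(job: JobSpec) -> list[str]:
    """List isolation-policy problems for one CI job definition."""
    problems = []
    if not job.ephemeral_runner:
        problems.append(f"{job.name}: must run on a runner destroyed after the job")
    if job.role == "scanner":
        excess = job.scopes - SCANNER_ALLOWED_SCOPES
        if excess:
            problems.append(f"{job.name}: scanner holds disallowed scopes {sorted(excess)}")
    return problems


if __name__ == "__main__":
    risky = JobSpec("image-scan", "scanner", frozenset({"registry:pull", "prod-db:write"}), False)
    for problem in policy_violations(risky):
        print(problem)
```

Using an allowlist for scanners, rather than a denylist of dangerous scopes, means any newly introduced permission is rejected by default.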

Some groups use a “smash-and-grab” style to quickly exfiltrate credentials and move on, while others remain silent for long-term access. How should incident response plans differ when dealing with high-velocity extortionists compared to state-sponsored actors, and what are the first three things an organization should do upon discovery?

High-velocity groups like TeamPCP prioritize speed over stealth, often getting in and out within hours, which requires an incident response plan focused on immediate containment and credential revocation. In contrast, state-sponsored actors like UNC1069 are often playing a longer game, necessitating a deeper forensic investigation to ensure no hidden backdoors remain. Upon discovery, the first three steps are: immediately rotate all secrets and CI/CD credentials, isolate the affected build environments, and audit your SBOMs to find every other system where the poisoned package has been deployed. You have to act as if every secret touched by the compromised tool is already in the hands of the adversary.
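Treating every secret the compromised tool touched as stolen only works if you can enumerate those secrets quickly. The sketch below assumes, hypothetically, that your CI system keeps a job history recording which tools ran, which secret names were injected, and which runner executed each job; it returns the secrets to rotate and the runners to isolate for any run that used the compromised tool inside the exposure window.

```python
from dataclasses import dataclass
from datetime import datetime


@dataclass(frozen=True)
class JobRun:
    job: str
    started: datetime
    tools: frozenset[str]    # e.g. {"scanner@9.9.9"}; naming scheme is hypothetical
    secrets: frozenset[str]  # names of secrets injected into the job environment
    runner: str


def blast_radius(
    runs: list[JobRun],
    compromised_tool: str,
    window_start: datetime,
    window_end: datetime,
) -> tuple[list[str], list[str]]:
    """Secrets to rotate and runners to isolate for runs that used the bad tool."""
    exposed = [
        r for r in runs
        if compromised_tool in r.tools and window_start <= r.started <= window_end
    ]
    secrets_to_rotate = sorted({s for r in exposed for s in r.secrets})
    runners_to_isolate = sorted({r.runner for r in exposed})
    return secrets_to_rotate, runners_to_isolate
```

Setting `window_start` to the earliest plausible compromise time, not the disclosure time, errs on the side of over-rotation, which matches the assume-breach stance described above.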

What is your forecast for open-source supply chain security?

I believe we are heading toward a period of “hyper-personalized” attacks where AI will allow threat actors to scale their social engineering to an unprecedented degree. We will see more “vibe researchers”: attackers who deeply understand developer culture and use that knowledge to slip malicious code into the most trusted parts of our infrastructure. However, this will also force widespread adoption of SBOMs and automated AI-driven auditing, eventually leading to a more resilient ecosystem where security is baked into the code’s DNA rather than treated as an afterthought. The window of opportunity for attackers is shrinking, but the sophistication of their entry methods is only going to grow.