Web server management has reached a point where a polished user interface can mask severe structural vulnerabilities. NGINX-UI emerged as an answer to the friction of command-line configuration, giving DevOps professionals both speed and granular control. By abstracting the complexities of NGINX into a centralized, web-based dashboard, it makes server administration accessible to a wider range of operators. Adopting such a tool, however, calls for a rigorous examination of its underlying security architecture, particularly as it integrates automation protocols. The shift from manual scripts to automated management platforms has become standard practice for maintaining high-uptime environments.

This technology operates on the principle of accessibility, translating the intricate syntax of NGINX configuration files into visual components. It serves as a comprehensive wrapper, managing not only the core server settings but also the accompanying ecosystem of certificates and log files. This evolution reflects a broader trend in infrastructure as code, where the goal is to reduce human error through visualization. While competitors often focus on rigid, enterprise-locked solutions, NGINX-UI maintains an open-source spirit that appeals to a wide demographic, from solo developers to large-scale production teams. The emergence of this tool coincides with a demand for real-time observability, allowing administrators to monitor traffic patterns and health metrics without leaving the browser environment.

Overview of NGINX-UI and the Management Landscape

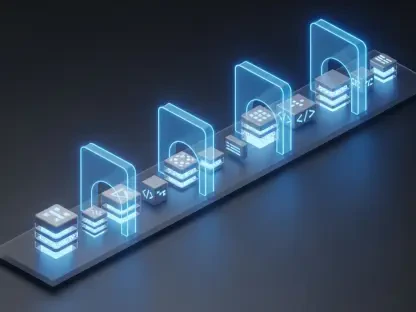

The core principles of NGINX-UI are rooted in the simplification of the reverse proxy and load balancing lifecycle. By providing a graphical layer over the NGINX binary, it allows for the rapid deployment of server blocks and upstream configurations that would traditionally require deep expertise in configuration syntax. The architecture consists of a lightweight backend that interacts directly with the server’s file system, ensuring that changes made through the web interface are reflected instantly in the configuration files. This context is vital because it moves the point of failure from the manual entry of a terminal to the security of the web interface itself, creating a centralized point of management for entire server clusters.
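To make the idea of a graphical layer over configuration files concrete, the following is a minimal sketch of how form fields might be turned into a reverse-proxy server block. It is not NGINX-UI's actual code: the `ProxySite` fields and the `render_server_block` function are hypothetical names chosen for illustration, and only the NGINX directives themselves (`listen`, `server_name`, `location`, `proxy_pass`, `proxy_set_header`) are real.

```python
from dataclasses import dataclass


@dataclass
class ProxySite:
    """Hypothetical form fields a dashboard might collect for one site."""
    server_name: str
    upstream_url: str
    listen_port: int = 80


def render_server_block(site: ProxySite) -> str:
    """Render a minimal NGINX reverse-proxy server block as text."""
    # Reject characters that could end a directive early and inject config.
    for value in (site.server_name, site.upstream_url):
        if any(ch in value for ch in ";{}\n\r"):
            raise ValueError(f"unsafe character in field: {value!r}")
    return (
        "server {\n"
        f"    listen {site.listen_port};\n"
        f"    server_name {site.server_name};\n"
        "\n"
        "    location / {\n"
        f"        proxy_pass {site.upstream_url};\n"
        "        proxy_set_header Host $host;\n"
        "    }\n"
        "}\n"
    )


if __name__ == "__main__":
    print(render_server_block(ProxySite("app.example.com", "http://127.0.0.1:3000")))
```

The input check illustrates why the web layer becomes the new point of failure: any field that reaches the file system unvalidated is effectively a way to write arbitrary configuration.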

As the industry transitioned away from manual command-line configuration, the relevance of centralized management platforms became undeniable. This shift was driven by the need for consistency across staging and production environments, where a single typo in a configuration file could lead to widespread downtime. NGINX-UI fills this gap by providing a user-friendly platform that enforces structure while retaining the performance characteristics of the underlying engine. This evolution has made the technology a staple in the DevOps toolkit, although it also concentrated administrative power into a single, potentially vulnerable web application.

Architectural Components and Integration Features

Model Context Protocol (MCP) Implementation

One of the most forward-thinking elements of the current architecture is the implementation of the Model Context Protocol. This integration is designed specifically to make server management AI-ready, enabling autonomous agents to suggest or execute configuration changes based on natural language inputs or observed traffic anomalies. By providing a structured way for machine intelligence to interact with the underlying system, the protocol promises a future of self-healing infrastructure. Unlike traditional APIs that require rigid endpoint calls, this implementation fosters a more conversational and context-aware interaction between the administrator and the server.

This technical flexibility allows for rapid prototyping of complex routing rules that would otherwise take hours of manual scripting to perfect. The protocol enables external tools to query the current state of the server and propose optimizations, which the administrator can then approve or reject through the interface. However, this implementation also introduced a new attack surface, as it opened up internal configuration endpoints to external calls. The uniqueness of this feature lies in its ability to bridge the gap between human decision-making and machine-led automation, but it demands a level of security that matches its high level of administrative access.
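The propose-then-approve workflow described here can be sketched as a small review queue. This is an illustrative model under stated assumptions, not the tool's implementation: `Proposal`, `ReviewQueue`, and their methods are hypothetical names, and a real system would also persist decisions and record who made them.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import Dict, List


class Status(Enum):
    PENDING = "pending"
    APPROVED = "approved"
    REJECTED = "rejected"


@dataclass
class Proposal:
    proposal_id: int
    author: str       # name of the external tool or agent (hypothetical field)
    summary: str
    new_config: str
    status: Status = Status.PENDING


@dataclass
class ReviewQueue:
    """Holds agent proposals until a human decides on each one."""
    _items: Dict[int, Proposal] = field(default_factory=dict)
    _next_id: int = 1

    def propose(self, author: str, summary: str, new_config: str) -> Proposal:
        proposal = Proposal(self._next_id, author, summary, new_config)
        self._items[proposal.proposal_id] = proposal
        self._next_id += 1
        return proposal

    def decide(self, proposal_id: int, approve: bool) -> Proposal:
        proposal = self._items[proposal_id]
        # A decision is final; re-deciding must be an explicit new proposal.
        if proposal.status is not Status.PENDING:
            raise ValueError("proposal was already decided")
        proposal.status = Status.APPROVED if approve else Status.REJECTED
        return proposal

    def approved_configs(self) -> List[str]:
        return [p.new_config for p in self._items.values()
                if p.status is Status.APPROVED]
```

Nothing an agent submits is applied until `decide` is called with `approve=True`, which keeps the human decision as the only path from proposal to configuration.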

Administrative Dashboard and Configuration Management

The administrative dashboard functions as the command center, where the orchestration of server blocks and reverse proxy rules occurs with minimal latency. For DevOps professionals, the ability to manage these elements through a unified interface translates to significant time savings and a reduction in the cognitive load associated with multi-server deployments. The interface is built to handle high volumes of configuration updates while keeping distributed nodes synchronized, and it is tuned so that even complex configurations involving hundreds of server blocks do not degrade the responsiveness of the management tool.

Real-world usage often highlights the platform’s ability to simplify SSL and TLS certificate deployments, which historically remained one of the most tedious aspects of web server maintenance. By centralizing these tasks, the tool ensures that security certificates are kept up to date across the entire fleet of managed servers. The integration of automated renewal services further enhances this capability, making it a robust solution for production-grade environments. Despite these benefits, the concentration of such critical management tasks in a single dashboard creates a high-value target for malicious actors, requiring constant vigilance regarding the security of the dashboard’s own access controls.

Recent Developments in Protocol Integration and Security

The trajectory of protocol integration has been heavily influenced by the push for hyper-automation and AI-ready applications. As organizations move toward more dynamic environments, the expectation is that infrastructure should respond to external triggers without direct human intervention. This trend led to the adoption of protocols that favor external accessibility, such as the Model Context Protocol, which bridges the gap between static configuration files and dynamic agents. The industry is witnessing a transition where the web server is no longer a passive entity but an active participant in a larger, automated ecosystem.

This shift mandates a rethink of how administrative tools are built, ensuring they can withstand the scrutiny of automated scanning while providing the openness required for collaboration. Emerging trends in automated infrastructure management have prioritized the ease of integration, sometimes at the expense of traditional security perimeters. The push for these advanced protocols has forced developers to balance the need for innovative features with the necessity of protecting the server from unauthorized configuration changes. This tension is at the heart of the latest security reviews, where the discovery of vulnerabilities has prompted a more cautious approach to protocol implementation.

Real-World Applications and Deployment Scenarios

Deployment scenarios for NGINX-UI span a diverse range of industries, from fintech firms requiring strict load balancing to media companies handling massive surges in streaming traffic. In large-scale production environments, the platform is often utilized to manage reverse proxies that protect sensitive backend services from direct exposure. The tool’s ability to streamline the deployment of Let’s Encrypt certificates has made it a favorite for organizations that prioritize encrypted communication across all endpoints. Furthermore, the interface is frequently used in development cycles to quickly spin up staging environments that mirror production settings, ensuring that configuration bugs are caught long before code reaches the end user.

Unique use cases often involve the management of complex traffic shaping rules and the deployment of web application firewalls through a centralized interface. By simplifying the management of these security layers, NGINX-UI helps small teams maintain a security posture that would otherwise require a dedicated infrastructure department. The platform’s ability to provide a visual representation of traffic flow and upstream health makes it an invaluable asset for troubleshooting during outages. This versatility proves that the technology is more than a simple GUI; it is a foundational piece of modern deployment pipelines that addresses the needs of high-performance web servers.

Technical Challenges and Critical Vulnerabilities

Despite its functional strengths, the technology has serious architectural security flaws. A critical bypass was identified in the communication channel responsible for handling MCP messages, where the system failed to verify the authenticity of requests at specific endpoints. This flaw, coupled with the reliance on a static node secret for identification, created a scenario in which an attacker could gain administrative privileges without valid credentials. The root cause was that the authentication mechanism was not applied uniformly across all service endpoints, leaving a gap in what was supposed to be a secure management interface.
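One common way to avoid this class of bug is to enforce authentication in a single dispatch path and make every route protected by default. The sketch below illustrates that pattern only; it is not the project's code or its fix. The `Router` class and the route paths are hypothetical, and the shared-token check is a stand-in for whatever credential scheme a real deployment uses.

```python
import hmac
from typing import Callable, Dict, Tuple

Handler = Callable[[dict], str]


class Router:
    """Tiny dispatcher where authentication is enforced in one place."""

    def __init__(self, api_secret: str):
        self._secret = api_secret.encode()
        self._routes: Dict[str, Tuple[Handler, bool]] = {}

    def add(self, path: str, handler: Handler, public: bool = False) -> None:
        # Routes are protected unless explicitly marked public.
        self._routes[path] = (handler, public)

    def dispatch(self, path: str, request: dict) -> Tuple[int, str]:
        if path not in self._routes:
            return 404, "not found"
        handler, public = self._routes[path]
        if not public and not self._authenticated(request):
            return 401, "unauthorized"
        return 200, handler(request)

    def _authenticated(self, request: dict) -> bool:
        presented = str(request.get("token", "")).encode()
        # Constant-time comparison avoids leaking how many bytes matched.
        return hmac.compare_digest(presented, self._secret)


router = Router("example-secret")                      # placeholder credential
router.add("/health", lambda req: "ok", public=True)
router.add("/agent/stream", lambda req: "streaming")   # hypothetical path
router.add("/agent/message", lambda req: "applied")    # hypothetical path
```

Because a newly registered handler cannot be reached without credentials unless someone writes `public=True`, forgetting to protect an endpoint becomes a visible choice rather than a silent omission.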

The risk is compounded by the interaction between multiple flaws, such as the combination of authentication bypasses and backup exposure vulnerabilities. When sensitive application data, including static secrets, is exposed through poorly protected backup files, the security of the entire system collapses. Ongoing development efforts have focused on mitigating these limitations by transitioning to more robust authentication frameworks that do not rely on static keys. The release of security patches, such as version 2.3.4, represents a significant step toward addressing these vulnerabilities, but the incident highlights the need for a security-first approach when designing management tools that have direct access to a server’s file system and configuration.

Future Outlook for AI-Driven Infrastructure Management

The path forward for AI-driven infrastructure management requires a fundamental shift toward per-action authentication. Future developments must move away from static, shared secrets toward dynamic, short-lived tokens that validate every single command issued by an AI agent or a human administrator. Breakthroughs in secure protocol design will likely focus on zero-trust principles, where no entity is granted persistent access to the server configuration regardless of their initial authentication status. This will ensure that even if an initial session is compromised, the attacker cannot perform high-impact actions without further verification.
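As a rough illustration of per-action, short-lived tokens, the sketch below binds each token to one named action and an expiry time using an HMAC. It is a simplified model under stated assumptions: the function names, the `action|expiry|signature` layout, and the 30-second lifetime are all hypothetical, and the signing key is assumed to be held only by the issuing service.

```python
import hashlib
import hmac
import time
from typing import Optional


def issue_token(key: bytes, action: str, ttl_seconds: int = 30,
                now: Optional[float] = None) -> str:
    """Issue a token valid for a single named action until it expires."""
    expires = int((time.time() if now is None else now) + ttl_seconds)
    payload = f"{action}|{expires}"
    signature = hmac.new(key, payload.encode(), hashlib.sha256).hexdigest()
    return f"{payload}|{signature}"


def verify_token(key: bytes, token: str, action: str,
                 now: Optional[float] = None) -> bool:
    """Accept the token only for the action it was issued for, before expiry."""
    try:
        token_action, expires_text, signature = token.rsplit("|", 2)
        expires = int(expires_text)
    except ValueError:
        return False  # malformed token
    payload = f"{token_action}|{expires_text}"
    expected = hmac.new(key, payload.encode(), hashlib.sha256).hexdigest()
    current = time.time() if now is None else now
    return (
        hmac.compare_digest(signature.encode(), expected.encode())
        and token_action == action
        and current < expires
    )
```

A token issued for one action is rejected for any other, and a stolen token stops working once it expires. This sketch does not prevent replay within the validity window; a real design would likely add a single-use nonce tracked on the server.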

The long-term impact of these improvements will be measured by the resilience of the global web against automated exploitation campaigns. As management tools become more sophisticated, the focus will shift from simple configuration management to intelligent, self-securing systems that can detect and block malicious configuration attempts in real-time. Maintaining the integrity of the global web will depend on the ability of these tools to evolve as quickly as the threats they face. The integration of AI will eventually serve as a defensive layer, where machine learning models analyze configuration changes for security risks before they are applied to production servers.
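The idea of screening configuration changes before they reach production can be illustrated without any machine learning. The sketch below swaps in a few hand-written rules as a stand-in for the models the text anticipates; the rule list and the `review_change` function are hypothetical and deliberately incomplete, though `autoindex`, `ssl_protocols`, `root`, and `alias` are real NGINX directives.

```python
import re
from typing import List

# Illustrative rules only; a real policy would need to be far more thorough.
RISKY_PATTERNS = [
    (re.compile(r"^\s*autoindex\s+on\s*;", re.M),
     "directory listing enabled"),
    (re.compile(r"^\s*ssl_protocols\b[^;]*\b(?:SSLv3|TLSv1(?:\.1)?)(?=[\s;])", re.M),
     "legacy TLS/SSL protocol enabled"),
    (re.compile(r"^\s*(?:alias|root)\s+/\s*;", re.M),
     "filesystem root exposed"),
]


def review_change(old_config: str, new_config: str) -> List[str]:
    """Return findings that the new config introduces relative to the old one."""
    findings = []
    for pattern, message in RISKY_PATTERNS:
        # Flag only risks that are new, so existing debt does not block edits.
        if pattern.search(new_config) and not pattern.search(old_config):
            findings.append(message)
    return findings


if __name__ == "__main__":
    old = "server {\n    ssl_protocols TLSv1.2 TLSv1.3;\n}\n"
    new = "server {\n    ssl_protocols TLSv1 TLSv1.2;\n    autoindex on;\n}\n"
    for finding in review_change(old, new):
        print("blocked:", finding)
```

A gate like this would run between the moment a change is submitted and the moment it is written to disk, so that a rejected change never reaches the running server.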

Assessment and Final Summary

The review of this technology reveals a complex balance between administrative efficiency and the inherent risks of adopting nascent protocols. While the introduction of AI-integrated tools offers a glimpse into a more automated future, the practical implementation exposed significant gaps in the security posture of the platform. The project maintainers took necessary steps to mitigate these risks by releasing urgent security patches, yet the incident serves as a stark reminder of the vulnerabilities that can linger in modern software. Overall, the technology remains a powerful asset for DevOps teams, but its reliability depends heavily on the rigor of its security architecture.

Ultimately, the impact on web infrastructure is significant, forcing a re-evaluation of how administrative interfaces should be hardened in an increasingly connected world. The transition from manual to centralized management brought undeniable benefits in terms of speed and consistency, but it also created new classes of vulnerabilities that require a different approach to defense. The technology’s current state suggests a maturing platform that is learning from its architectural missteps, pointing toward a future where security is not an add-on but a fundamental component of the user experience. Future steps should include mandatory multi-factor authentication and continuous auditing of all protocol integrations, so that convenience does not again override the safety of the server.