The shift from traditional face-to-face counseling to digital platforms has happened with breathtaking speed, yet the infrastructure supporting these services often remains dangerously fragile. Millions of people have turned to Android applications to manage anxiety, depression, and daily stressors, but a recent security audit of ten prominent mental health apps has exposed a troubling reality about the safety of user information. These platforms, which collectively account for over 14 million downloads on the Google Play Store, hold some of the most sensitive data imaginable, including therapy session transcripts, medication schedules, and emotional logs. The investigation, conducted by security firm Oversecured, identified 1,575 vulnerabilities across these apps, ranging from low-severity configuration errors to high-severity flaws that could facilitate data breaches. This gap between the convenience of digital therapy and the technical safeguards actually in place raises urgent questions about the ethics of mobile health development.

The High Cost of Digital Intimacy

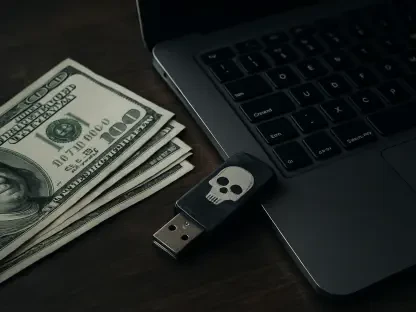

The Economic Value: Why Health Data Is Targeted

In the underground economy of the dark web, mental health records have emerged as a premium commodity, often commanding significantly higher prices than stolen credit card numbers or standard personal identifiers. While a compromised financial account can be quickly closed or a physical card replaced, a person’s mental health history is a permanent record that cannot be “reset” once it is exposed to the public or to malicious actors. Cybercriminals have recognized this permanence: individual therapy records reportedly sell for $1,000 or more each because of their potential for long-term extortion or targeted social engineering. This high valuation creates a lucrative and persistent incentive for bad actors to exploit the specific vulnerabilities found in apps that store mood logs and clinical tools. The market shift toward medical data theft reflects a calculated move by hackers to target assets that retain their value indefinitely, making the protection of this data a matter of lifelong privacy rather than just temporary financial security.

The Irreversibility: Personal History as a Permanent Asset

The sensitive nature of emotional data means that a single leak can have devastating consequences for a user’s professional life, personal relationships, and overall well-being. Unlike generic consumer data, the information shared within a mental health app—such as admissions of substance use, suicidal ideation, or interpersonal conflicts—is uniquely tied to the individual’s identity and psychological profile. When developers fail to prioritize security, they are not just risking a password; they are essentially leaving the door open to a person’s most private thoughts and vulnerabilities. This permanent digital footprint remains a liability for the user long after they have stopped using the application or even deleted their account. The lack of robust security hygiene across the industry suggests that many vendors view data protection as a secondary concern to user acquisition and feature expansion. Consequently, the burden of risk falls entirely on the shoulders of vulnerable individuals who believe they are using a secure sanctuary.

Technical Failures in Mobile Health Security

Systemic Vulnerabilities: From URI Parsing to Local Storage

The security audit identified several recurring technical themes that compromise user privacy, with many applications failing to implement the most basic isolation protocols. One of the most prevalent issues involved insecure URI parsing and intent manipulation, where apps failed to validate user-supplied strings correctly. This flaw allows an attacker to force the application to open internal activities that were never intended to be accessible from the outside, potentially granting direct access to authentication tokens and private therapy records. Furthermore, researchers discovered that many apps stored sensitive information in the local filesystem in a way that provided read access to other applications on the same device. This “snooping” capability means that a malicious app, disguised as a simple utility or game, could quietly harvest therapy notes and CBT session scores without the user ever knowing. These failures demonstrate a fundamental breakdown in secure programming practices that should be mandatory for any software handling medical information.
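To make the URI-parsing problem concrete, the following is a minimal sketch of the kind of allowlist check an app could apply before routing an externally supplied link to an internal screen. It is not taken from the audit: the class name `DeepLinkValidator`, the host `app.example.com`, and the listed paths are all hypothetical, and plain `java.net.URI` is used so the example runs outside Android.

```java
import java.net.URI;
import java.net.URISyntaxException;
import java.util.Set;

/** Hypothetical helper: decides whether an externally supplied link may be opened. */
final class DeepLinkValidator {
    // Assumed values for illustration; a real app would list its own scheme, host, and routes.
    private static final String ALLOWED_SCHEME = "https";
    private static final String ALLOWED_HOST = "app.example.com";
    private static final Set<String> ALLOWED_PATHS = Set.of("/home", "/articles", "/help");

    private DeepLinkValidator() {}

    static boolean isAllowed(String raw) {
        if (raw == null) {
            return false;
        }
        final URI uri;
        try {
            // normalize() collapses "." and ".." segments before the path is compared.
            uri = new URI(raw).normalize();
        } catch (URISyntaxException e) {
            return false; // Malformed input is rejected rather than repaired.
        }
        // Reject opaque URIs (e.g. "javascript:...") and links that carry user info,
        // a common trick for disguising the real host ("trusted.com@evil.example").
        if (uri.isOpaque() || uri.getRawUserInfo() != null) {
            return false;
        }
        if (!ALLOWED_SCHEME.equalsIgnoreCase(uri.getScheme())) {
            return false;
        }
        if (!ALLOWED_HOST.equalsIgnoreCase(uri.getHost())) {
            return false;
        }
        // Only explicitly listed public routes pass; everything else is denied by default.
        String path = uri.getPath();
        return path != null && ALLOWED_PATHS.contains(path);
    }
}
```

The design choice worth noting is deny-by-default: the check enumerates the few destinations that outside callers may reach instead of trying to list every dangerous one.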

Hardcoded Secrets: A Roadmap for Backend Attacks

Beyond local device security, the investigation revealed that many developers left sensitive backend configurations directly within the APK resources in plaintext. This includes hardcoded Firebase database URLs and API endpoints that effectively provide a roadmap for sophisticated attackers to bypass the app entirely and target the vendor’s servers. By exposing these internal structures, developers significantly lower the barrier for mass data extraction, moving the risk from individual device compromise to large-scale organizational breaches. The presence of such elementary mistakes in high-traffic applications, some with over ten million downloads, suggests that security reviews are often bypassed in the rush to push updates. These hardcoded values are essentially master keys left under the digital doormat, rendering even the most complex user passwords irrelevant if the underlying infrastructure is accessible through these shortcuts. For a cybercriminal, these vulnerabilities represent a high-reward path to obtaining vast quantities of sensitive healthcare data.
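A release pipeline can catch this class of mistake before an APK ships. The sketch below scans text extracted from app resources for two telltale strings: Firebase Realtime Database URLs and values shaped like Google API keys. The class name `HardcodedSecretScanner` is hypothetical and both patterns are simplified heuristics assumed for illustration, not the rules used in the audit. A match indicates something worth reviewing, not a confirmed vulnerability.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.regex.Matcher;
import java.util.regex.Pattern;

/** Hypothetical scanner for backend details left in plaintext resources. */
final class HardcodedSecretScanner {
    // Heuristic patterns (assumptions, far from exhaustive).
    private static final Pattern FIREBASE_DB_URL = Pattern.compile(
            "https://[a-z0-9.-]+\\.(?:firebaseio\\.com|firebasedatabase\\.app)");
    private static final Pattern GOOGLE_API_KEY = Pattern.compile("AIza[0-9A-Za-z_-]{35}");

    private HardcodedSecretScanner() {}

    /** Returns one "label: value" entry per suspicious string found in the text. */
    static List<String> scan(String text) {
        List<String> findings = new ArrayList<>();
        collect(FIREBASE_DB_URL, "firebase-db-url", text, findings);
        collect(GOOGLE_API_KEY, "google-api-key", text, findings);
        return findings;
    }

    private static void collect(Pattern pattern, String label, String text, List<String> out) {
        Matcher matcher = pattern.matcher(text);
        while (matcher.find()) {
            out.add(label + ": " + matcher.group());
        }
    }
}
```

In practice the input would be the decoded contents of files such as `strings.xml`, and a non-empty result would fail the build or open a review ticket.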

Weak Encryption and the Illusion of Privacy

Privacy Theater: The Reality of Security Claims

Marketing materials for mental health apps frequently promise “military-grade” encryption and total privacy, yet the technical reality often paints a different and far more concerning picture. Researchers found that several developers used weak, predictable methods for generating encryption keys, such as the java.util.Random class, which is not cryptographically secure: its output is fully determined by a small internal seed, so an attacker who recovers or guesses that seed can regenerate the same key. When an app builds its encryption on such a predictable foundation, the safety claims in its privacy policy become effectively moot. This phenomenon, often referred to as “privacy theater,” creates a false sense of security for users who are at their most vulnerable. When a therapy chatbot claims to keep conversations private while utilizing insecure messaging objects, it misleads the user into sharing intimate details they might otherwise keep hidden. The gap between what is promised in the interface and what is implemented in the code represents a significant ethical failure in the industry.
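The difference is easy to demonstrate with the standard Java library. In the sketch below, the class and method names (`KeyGenerationDemo`, `weakKey`, `strongKey`) are hypothetical. `weakKey` mimics the flawed approach by filling a key from `java.util.Random`, while `strongKey` asks `javax.crypto.KeyGenerator` for a 256-bit AES key backed by `SecureRandom`. It illustrates key generation only and is not a complete encryption scheme.

```java
import java.security.NoSuchAlgorithmException;
import java.security.SecureRandom;
import java.util.Random;
import javax.crypto.KeyGenerator;
import javax.crypto.SecretKey;

/** Hypothetical demo contrasting predictable and unpredictable key material. */
final class KeyGenerationDemo {
    private KeyGenerationDemo() {}

    /** Flawed: the same seed always yields the same 32 "key" bytes. */
    static byte[] weakKey(long seed) {
        byte[] key = new byte[32];
        new Random(seed).nextBytes(key);
        return key;
    }

    /** Safer: a 256-bit AES key drawn from a cryptographically secure generator. */
    static SecretKey strongKey() {
        try {
            KeyGenerator generator = KeyGenerator.getInstance("AES");
            generator.init(256, new SecureRandom());
            return generator.generateKey();
        } catch (NoSuchAlgorithmException e) {
            // AES is a required algorithm on standard Java platforms.
            throw new IllegalStateException("AES key generation unavailable", e);
        }
    }
}
```

Calling `weakKey` twice with the same seed returns identical bytes, which is exactly what an attacker relies on. On Android, a production app would typically go further and keep such keys in the platform keystore rather than in app memory or files.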

The Missing Safeguards: Root Detection and Sandboxing

A critical omission in the majority of the audited apps was the lack of root detection, a security feature that identifies whether a device’s operating system has been modified to grant superuser access. On a rooted Android device, the standard “sandboxing” that keeps applications from accessing each other’s data can be bypassed by any app or process that obtains root privileges, leaving health records exposed. Without root detection, a mental health app cannot warn the user that their sensitive data is at heightened risk, nor can it refuse to run in an insecure environment. Furthermore, the audit highlighted that the rapid growth of the mental health market has outpaced the implementation of necessary protocols, such as certificate pinning or robust session management. As these platforms evolve to include more complex AI-driven interactions, the lack of these foundational security measures becomes increasingly dangerous. For the user, the digital “companion” they turn to for support often lacks the basic armor required to defend their data against even common mobile threats.
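As a rough illustration of what root detection involves, the sketch below implements one of the simplest heuristics: checking whether a `su` binary exists in locations where rooting tools have commonly placed it. The class name `RootHeuristics` and the path list are assumptions for illustration. Real detection libraries combine many more signals, and root-hiding tools can defeat a check like this, so it should be read as a starting point rather than a safeguard.

```java
import java.io.File;
import java.util.List;

/** Hypothetical, deliberately simple root-detection heuristic. */
final class RootHeuristics {
    // Assumed, non-exhaustive list of places where a `su` binary is often found.
    static final List<String> COMMON_SU_PATHS = List.of(
            "/system/bin/su",
            "/system/xbin/su",
            "/sbin/su",
            "/su/bin/su",
            "/data/local/bin/su",
            "/data/local/xbin/su");

    private RootHeuristics() {}

    /** Returns true if at least one of the candidate paths exists on this device. */
    static boolean anyPathExists(List<String> candidatePaths) {
        for (String path : candidatePaths) {
            if (new File(path).exists()) {
                return true;
            }
        }
        return false;
    }

    /** Convenience wrapper that checks the assumed default locations. */
    static boolean looksRooted() {
        return anyPathExists(COMMON_SU_PATHS);
    }
}
```

An app could use a positive result to show a warning or to limit which records are cached locally, rather than silently continuing as if the sandbox were intact.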

Industry Stagnation and the Path Forward

The Accountability Gap: Responsiveness and Regulation

A significant concern highlighted by the research is the slow pace of security updates and the apparent stagnation in developer responsiveness within the mental health sector. As of early 2026, only a small minority of the audited apps had received recent security patches, with some platforms not seeing an update for over a year. This lack of maintenance suggests that security is often treated as an afterthought or a one-time task rather than a continuous process of defense against evolving threats. Because the vulnerabilities identified are currently in the disclosure process, specific names of the applications have been withheld to protect current users, yet the industry-wide trend is undeniable. The rapid integration of AI and more invasive data collection methods has only widened the gap between the functional utility of these apps and their safety. This suggests that without stricter regulatory oversight and mandatory security audits, the industry will continue to prioritize growth over the fundamental right to medical privacy and data integrity.

Future Safeguards: Toward a More Secure Digital Sanctuary

The investigation into the security posture of mental health applications provides a clear mandate for both developers and users to adopt more rigorous standards of digital hygiene. To address these systemic failures, developers should move toward mandatory third-party security audits and build automated vulnerability scanning into their standard release cycles. Users are encouraged to check how frequently an app is updated and to look for transparency reports before sharing any clinical or emotional information. Regulatory bodies, for their part, could push for standardized certifications that differentiate between casual wellness trackers and tools intended for clinical mental health management. Only by acknowledging that therapy data is a permanent and highly valued asset can the industry move away from “privacy theater” toward genuine, verifiable technical safeguards. Such steps would help ensure that the digital sanctuary promised to millions of people is built on a foundation of security that matches the sensitivity of the human emotions it is designed to support.