The transition of artificial intelligence from an experimental curiosity to a cornerstone of enterprise infrastructure has fundamentally altered the security landscape for modern executives. No longer confined to isolated research departments, AI systems now inform high-stakes decisions, manage critical data flows, and automate customer interactions across nearly every industry. This rapid integration has created a paradox: the very tools designed to improve efficiency also introduce vulnerabilities that traditional security architectures were never built to mitigate. As organizations navigate this environment, the security leader's role has expanded from technical guardian to strategic orchestrator of risk and governance. The challenge lies in protecting systems that are increasingly autonomous, interconnected, and opaque. Managing these risks requires more than updated software; it demands a fundamental shift in how leaders think about identity, trust, and corporate accountability when machines, not just people, make and execute decisions.

Rethinking Identity: Managing Non-Human Actors

The concept of identity in the corporate digital ecosystem has undergone a radical transformation, moving far beyond the management of human employee credentials. Non-human identities, such as AI agents, automated bots, and autonomous scripts, are now among the most active participants in many enterprise workflows. These entities often hold the same level of access to sensitive data repositories as senior executives, yet they act without real-time human review. Leaders must recognize that traditional identity and access management protocols, which rely on static permissions and infrequent audits, are insufficient for governing these dynamic machine actors. When an AI agent can execute financial transactions or modify database schemas, a misused credential or a manipulated instruction can cause damage at machine speed. Effective management therefore requires a granular inventory of every non-human entity active on the network, with a clearly defined scope of authority for each that is strictly enforced through automated controls.
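The Python sketch below makes "a clearly defined scope of authority" concrete. It models a non-human identity with an explicit set of granted scopes and denies any action outside them by default.

The following are hypothetical illustrations, not the API of any particular identity product:
- the `AgentIdentity` class,
- the `is_authorized` helper,
- scope names such as `purchase_orders:create`.

A production system would normally enforce this check in an identity provider or policy engine rather than in application code.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class AgentIdentity:
    """A non-human identity (AI agent, bot, or script) with explicit scopes.

    Hypothetical model for illustration only.
    """
    agent_id: str
    owner: str  # accountable human or team for this agent
    scopes: frozenset[str] = field(default_factory=frozenset)


def is_authorized(agent: AgentIdentity, required_scope: str) -> bool:
    """Deny by default: allow an action only if its scope was explicitly granted."""
    return required_scope in agent.scopes


procurement_bot = AgentIdentity(
    agent_id="procurement-bot-01",
    owner="finance-ops",
    scopes=frozenset({"vendors:read", "purchase_orders:create"}),
)

print(is_authorized(procurement_bot, "purchase_orders:create"))  # True
print(is_authorized(procurement_bot, "hr_records:read"))         # False
```

Because every agent carries a named owner, each automated action can be traced back to an accountable person or team.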

This expansion of the identity perimeter calls for a shift toward what is often described as runtime identity management. In this model, trust is not a one-time verification event at login but a continuous evaluation. As an AI system performs its tasks, its identity and the legitimacy of its actions are monitored in real time to detect deviations from established behavioral norms. For instance, if an automated procurement tool suddenly attempts to access sensitive personnel files, the system should revoke its trust immediately, regardless of the credentials it presented initially. By implementing these dynamic trust models, organizations keep their security posture as responsive as the AI processes they protect. This approach narrows the window for attackers who try to hijack legitimate automated processes to move laterally through a network or exfiltrate data without triggering traditional alarms.
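The runtime model can be sketched as a monitor that re-checks every request against a behavioral baseline and revokes trust on the first deviation. In this minimal sketch, `RuntimeTrustMonitor` and its `baseline` of resource categories are assumptions made for illustration. How a real system learns that baseline, and whether it revokes outright or first requires additional verification, depends on the platform in use.

```python
from dataclasses import dataclass, field


@dataclass
class RuntimeTrustMonitor:
    """Re-evaluates trust on every request instead of only at login.

    Hypothetical sketch: `baseline` is the set of resource categories this
    agent normally touches; learning that baseline is out of scope here.
    """
    agent_id: str
    baseline: set[str]
    revoked: bool = False
    audit_log: list[str] = field(default_factory=list)

    def check(self, resource_category: str) -> bool:
        if self.revoked:
            self.audit_log.append(f"DENY {resource_category}: trust already revoked")
            return False
        if resource_category not in self.baseline:
            # Deviation from established behavior: revoke trust immediately,
            # regardless of the credentials presented at login.
            self.revoked = True
            self.audit_log.append(f"REVOKE on {resource_category}: outside baseline")
            return False
        self.audit_log.append(f"ALLOW {resource_category}")
        return True


monitor = RuntimeTrustMonitor("procurement-bot-01", baseline={"vendors", "purchase_orders"})
print(monitor.check("vendors"))          # True
print(monitor.check("personnel_files"))  # False, and trust is revoked
print(monitor.check("vendors"))          # False: revoked for the rest of the session
```

Revocation here deliberately persists for the rest of the session, mirroring the procurement example. Once the tool reaches for personnel files, even its normally permitted requests are denied until a human reviews the audit log.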

Bridging the Governance Gap: Strategy Over Velocity

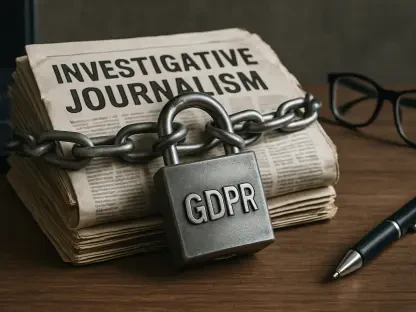

A significant tension exists between the organizational drive for rapid AI adoption and the need for robust governance frameworks. Many enterprises find themselves caught in a governance gap, where the velocity of technological implementation far outpaces the development of internal policies and risk management protocols. This discrepancy often leads to shadow AI, where departments deploy unapproved third-party tools to achieve short-term productivity gains without considering the long-term security implications. Such unsanctioned usage can cause significant data leakage when sensitive corporate information is submitted to external services whose retention and data-use practices the organization has not vetted. Leaders must address this by establishing clear, transparent governance structures that provide a pathway for safe innovation rather than merely imposing restrictive bans. By centralizing oversight of AI initiatives, organizations can ensure that every deployment aligns with the corporate risk appetite and complies with evolving international regulations.
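One practical way to surface shadow AI without an outright ban is to compare outbound traffic against the list of AI services the governance body has approved. The sketch below assumes proxy log entries arrive as `(department, domain)` pairs. The domain lists and the `find_shadow_ai` function are hypothetical placeholders that use the reserved `.example` domain.

```python
from collections import Counter

# Hypothetical lists: a real program would source these from the central AI
# governance inventory and from a maintained catalog of AI service domains.
APPROVED_AI_DOMAINS = {"llm.approved-vendor.example"}
KNOWN_AI_DOMAINS = APPROVED_AI_DOMAINS | {"chat.unvetted-ai.example", "api.free-llm.example"}


def find_shadow_ai(proxy_log: list[tuple[str, str]]) -> Counter:
    """Count requests per (department, domain) to known but unapproved AI services.

    Each log entry is assumed to be a (department, destination_domain) pair.
    """
    hits = Counter()
    for department, domain in proxy_log:
        if domain in KNOWN_AI_DOMAINS and domain not in APPROVED_AI_DOMAINS:
            hits[(department, domain)] += 1
    return hits


log = [
    ("marketing", "chat.unvetted-ai.example"),
    ("marketing", "chat.unvetted-ai.example"),
    ("engineering", "llm.approved-vendor.example"),
    ("legal", "api.free-llm.example"),
    ("sales", "intranet.corp.example"),
]
for (dept, domain), count in find_shadow_ai(log).most_common():
    print(f"{dept}: {count} request(s) to unapproved {domain}")
```

The output is meant to start a conversation with the departments involved and route their tools into the approval pathway, rather than to trigger an automatic block.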

Operationalizing AI governance requires a multidisciplinary approach that integrates security, legal, and business units into a unified decision-making body. Successful leaders are those who move beyond theoretical policy-making to create practical, scalable frameworks that provide real-world guidance for developers and end-users alike. This involves the creation of comprehensive inventories for all AI assets, rigorous testing for model bias, and clear protocols for data provenance. When governance is viewed as an enabler of growth rather than a hurdle, it allows the organization to build a foundation of trust with its stakeholders and customers. Furthermore, by embedding security-by-design principles into the early stages of the AI lifecycle, companies can avoid the substantial costs associated with remediating vulnerabilities after a system has already reached full-scale production. This strategic foresight is essential for maintaining a competitive advantage while minimizing the potential for reputational damage or regulatory fines.
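An AI asset inventory becomes actionable when each entry records ownership, data provenance, and testing status, so that gaps can be reported automatically. The `AIAsset` record and `governance_gaps` function below are a hypothetical sketch. In particular, the 180-day limit on bias-test age is an arbitrary placeholder, not a recommended standard.

```python
from dataclasses import dataclass, field
from datetime import date
from typing import Optional


@dataclass
class AIAsset:
    """One entry in a hypothetical AI asset inventory."""
    name: str
    owner: str                 # accountable team or individual
    model_source: str          # e.g. "internal" or "third-party"
    data_sources: list[str] = field(default_factory=list)  # documented data provenance
    last_bias_test: Optional[date] = None


def governance_gaps(
    inventory: list[AIAsset], today: date, max_test_age_days: int = 180
) -> dict[str, list[str]]:
    """Map each asset name to the governance checks it currently fails."""
    gaps: dict[str, list[str]] = {}
    for asset in inventory:
        issues = []
        if not asset.data_sources:
            issues.append("no documented data provenance")
        if asset.last_bias_test is None:
            issues.append("never tested for bias")
        elif (today - asset.last_bias_test).days > max_test_age_days:
            issues.append("bias test out of date")
        if issues:
            gaps[asset.name] = issues
    return gaps


inventory = [
    AIAsset("support-chatbot", "customer-care", "third-party",
            ["faq_articles"], date(2024, 1, 10)),
    AIAsset("resume-screener", "hr", "internal"),
]
print(governance_gaps(inventory, today=date(2024, 3, 1)))
# {'resume-screener': ['no documented data provenance', 'never tested for bias']}
```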

Combatting Threats: Understanding Real-World Risks

The same advancements that empower enterprise productivity are also being weaponized by sophisticated malicious actors to enhance their offensive capabilities. Attackers now use AI to run highly personalized phishing campaigns at scale, generate polymorphic malware that evades signature-based detection, and automate the discovery of vulnerabilities in complex software supply chains. This shift means that defenders can no longer rely solely on human-led response teams to keep pace with the speed of modern attacks. Security leaders are now responsible for a new era of cyber defense, in which AI-driven security tools are used to counter AI-driven threats. This requires a fundamental change in defensive strategy, away from reactive measures and toward proactive threat hunting and automated incident response. By using machine learning to analyze massive datasets for subtle indicators of compromise, organizations can detect and contain threats earlier, often before they escalate into full-scale breaches.
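The idea of learning what "normal" looks like and flagging deviations can be illustrated with a per-host z-score on daily outbound traffic. This is a deliberately simple statistical stand-in for the machine-learning pipelines described above, not a description of any specific product. The host names, the traffic figures, and the threshold of three standard deviations are all assumptions.

```python
import statistics


def anomalous_hosts(
    history: dict[str, list[float]],
    today: dict[str, float],
    threshold: float = 3.0,
) -> list[str]:
    """Flag hosts whose value today sits more than `threshold` standard
    deviations above their own historical mean (a per-host z-score)."""
    flagged = []
    for host, values in history.items():
        if host not in today or len(values) < 2:
            continue  # too little data to establish a baseline
        mean = statistics.mean(values)
        stdev = statistics.stdev(values)
        if stdev == 0:
            # Perfectly constant history: treat any increase as a deviation.
            is_anomalous = today[host] > mean
        else:
            is_anomalous = (today[host] - mean) / stdev > threshold
        if is_anomalous:
            flagged.append(host)
    return flagged


# Hypothetical daily outbound traffic per host, in megabytes.
history = {
    "web-01": [120, 130, 125, 118, 127, 122, 131],
    "db-02": [40, 42, 39, 41, 40, 43, 38],
}
today = {"web-01": 129, "db-02": 310}

print(anomalous_hosts(history, today))  # ['db-02']
```

Here `db-02` is flagged because its traffic jumped far above its own history, which could indicate data exfiltration and warrants investigation. Real deployments combine many such signals and tune thresholds to keep false positives manageable.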

Beyond the threat of external attacks, leaders must also contend with the practical risks of using large language models and other generative technologies internally. There is a critical need to separate marketing hype from the actual risk profiles of these tools within a corporate setting. For example, while generative AI can significantly boost coding efficiency, it may also introduce security flaws or reproduce copyrighted material, creating legal and technical liabilities. Leaders must ensure that their teams can evaluate these tools critically, focusing on how data is handled, stored, and protected during each interaction. This scrutiny demystifies complex technologies and allows teams to implement specific, targeted controls that address actual vulnerabilities. By grounding their security strategies in a realistic assessment of tool performance and risk, executives can give their organizations the clarity needed to navigate a volatile digital environment.
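One targeted control of this kind is to redact sensitive values before a prompt is sent to an external model. The sketch below is a minimal illustration. The regular expressions, including the `CUST-` customer-ID format, are hypothetical, and pattern matching alone will miss many kinds of sensitive data.

```python
import re

# Hypothetical patterns: real deployments would maintain these centrally
# and usually rely on a dedicated data-loss-prevention service.
REDACTION_RULES = [
    (re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+"), "[EMAIL]"),
    (re.compile(r"\bCUST-\d{6}\b"), "[CUSTOMER_ID]"),  # assumed internal ID format
]


def redact_prompt(prompt: str) -> tuple[str, int]:
    """Replace sensitive substrings before a prompt leaves the organization.

    Returns the redacted text and the number of replacements made, so the
    count can be logged for review.
    """
    total = 0
    for pattern, placeholder in REDACTION_RULES:
        prompt, n = pattern.subn(placeholder, prompt)
        total += n
    return prompt, total


text, count = redact_prompt(
    "Summarize the complaint from jane.doe@corp.example about order CUST-004512."
)
print(text)   # Summarize the complaint from [EMAIL] about order [CUSTOMER_ID].
print(count)  # 2
```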

Building Resilience: The Path to Enterprise Stability

The path toward long-term enterprise resilience in the age of artificial intelligence is paved with strategic investment in human capital and cross-functional collaboration. Managing AI risk is not a task that can be relegated to the IT department alone; it requires a collective commitment from the highest levels of leadership down to the individual contributor. We are seeing the rise of specialized roles, such as the Chief AI Officer, which signals a broader trend toward professionalizing AI oversight. These leaders serve as the bridge between technical execution and business strategy, ensuring that AI initiatives remain ethical and secure. By fostering a culture of continuous learning, organizations can keep their workforce capable of identifying and mitigating the unique challenges posed by autonomous systems. This investment in skills is vital, because the rapid pace of technological change often renders static security rules obsolete within months of their implementation.

To secure a stable future, organizations must prioritize a roadmap that emphasizes visibility, dynamic governance, and active defense. The first step is gaining a comprehensive view of how AI is used across the enterprise, including identifying every human and non-human entity that interacts with corporate systems. Once this visibility is established, leaders can implement flexible governance frameworks that keep pace with the business while maintaining strict adherence to safety standards. The final phase deploys active, AI-powered defenses that provide a continuous cycle of monitoring and rapid response. Together, these steps allow forward-thinking companies to turn their security posture from a defensive necessity into a strategic asset. By focusing on actionable insights and fostering collaboration, executives can navigate the transition into an AI-integrated economy. This proactive approach keeps their organizations secure, compliant, and prepared to capitalize on the transformative potential of artificial intelligence while minimizing its inherent risks.