The transition from conversational chatbots to autonomous AI agents has expanded the attack surface in ways that legacy security protocols struggle to address. Agentic systems are no longer passive recipients of prompts; they can navigate the web, interact within multi-agent swarms, and manipulate sensitive internal data without constant human intervention. This shift leaves a gap in which traditional perimeter defenses and user-based permissions fail to account for the velocity and autonomy of machine-led actions. To mitigate these risks, Microsoft has integrated a multi-layered security framework into its core ecosystem, combining identity management with automated defense mechanisms. By leveraging tools like Azure AI Foundry and Microsoft Entra ID, the company seeks to establish a standardized environment where autonomous activity is not only visible but also strictly governed.

Redefining Identity Management: Securing Nonhuman Entities

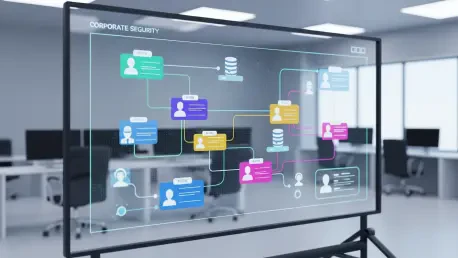

A critical vulnerability in modern enterprise environments stems from the lack of visibility into nonhuman identities, leaving security teams uncertain about which autonomous entities are accessing specific resources. Microsoft addresses this by extending the capabilities of Entra ID to include a dedicated agent registry, ensuring every AI entity receives a unique, trackable identity that functions much like a digital passport. This metadata-rich structure allows the system to distinguish between an agent acting on its own initiative and one operating on behalf of a specific human user, providing much-needed clarity for auditing. By treating these agents as first-class citizens within the corporate directory, administrators can apply the same rigorous standards of verification used for human employees. This foundation ensures that every action taken by a machine is attributed to a specific, authenticated identity, effectively eliminating the anonymity that often leads to security breaches.
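The registry idea can be illustrated with a minimal sketch. It is not the Entra ID schema or API: the `AgentIdentity` and `AgentRegistry` classes and all their field names are hypothetical, chosen only to show how a single record can separate an agent acting on its own from one acting on behalf of a user.

```python
from dataclasses import dataclass
from typing import Dict, Optional
import uuid


@dataclass(frozen=True)
class AgentIdentity:
    """Hypothetical registry record for one AI agent (not a real Entra ID schema)."""
    agent_id: str
    display_name: str
    owner: str                          # team or person accountable for the agent
    on_behalf_of: Optional[str] = None  # human user the agent acts for, if any

    @property
    def acts_autonomously(self) -> bool:
        # No delegating user means the agent acts on its own initiative.
        return self.on_behalf_of is None


class AgentRegistry:
    """In-memory stand-in for a directory of nonhuman identities."""

    def __init__(self) -> None:
        self._agents: Dict[str, AgentIdentity] = {}

    def register(self, display_name: str, owner: str,
                 on_behalf_of: Optional[str] = None) -> AgentIdentity:
        # Each agent receives its own unique, trackable identifier.
        identity = AgentIdentity(str(uuid.uuid4()), display_name, owner, on_behalf_of)
        self._agents[identity.agent_id] = identity
        return identity

    def attribute(self, agent_id: str) -> str:
        """Return a human-readable attribution string for audit entries."""
        identity = self._agents.get(agent_id)
        if identity is None:
            return "unregistered agent"
        if identity.acts_autonomously:
            return f"{identity.display_name} (autonomous, owner: {identity.owner})"
        return f"{identity.display_name} (on behalf of {identity.on_behalf_of})"
```

In this sketch, any action whose `agent_id` is missing from the registry is attributed to an "unregistered agent", which is the anonymous case that the registry is meant to surface.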

Establishing these distinct identities enables organizations to enforce the principle of least privilege with a level of precision that was previously unattainable for autonomous software components. Security teams can now define exactly which databases, applications, and network segments an agent is permitted to touch, ensuring that its access is limited to the bare minimum required for its designated task. This transformation turns what used to be a shadowy background process into a highly manageable asset with a comprehensive behavioral log that can be monitored for any signs of deviation. If an agent attempts to access sensitive payroll data while assigned to a marketing research task, the identity-based system can automatically trigger an alert or revoke access immediately. Furthermore, this transparency allows for a more detailed analysis of how autonomous agents interact with one another, preventing the formation of unregulated “shadow swarms” that could potentially exfiltrate data.
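The payroll scenario can be sketched as a simple identity-scoped access check. This is an illustration under stated assumptions, not Microsoft's implementation: `AgentPolicy`, `request_access`, and the resource names are all hypothetical, and the choice to revoke on the first out-of-scope request is one possible policy among several (raising an alert without revoking would be another).

```python
from dataclasses import dataclass, field
from typing import FrozenSet, List, Tuple


@dataclass
class AgentPolicy:
    """Hypothetical least-privilege policy bound to one agent identity."""
    agent_id: str
    task: str
    allowed_resources: FrozenSet[str]   # the minimum set needed for the task
    revoked: bool = False
    audit_log: List[Tuple[str, str]] = field(default_factory=list)


def request_access(policy: AgentPolicy, resource: str) -> bool:
    """Allow only in-scope resources; revoke the agent on the first violation."""
    if policy.revoked:
        policy.audit_log.append((resource, "denied: access revoked"))
        return False
    if resource in policy.allowed_resources:
        policy.audit_log.append((resource, "allowed"))
        return True
    # An out-of-scope request is treated as a deviation from the assigned task.
    policy.revoked = True
    policy.audit_log.append((resource, "denied: out of scope, access revoked"))
    return False


# Assumed example: a marketing research agent that reaches for payroll data.
policy = AgentPolicy(
    agent_id="agent-42",
    task="marketing research",
    allowed_resources=frozenset({"crm.campaigns", "web.search"}),
)
request_access(policy, "crm.campaigns")  # in scope
request_access(policy, "hr.payroll")     # out of scope, triggers revocation
```

Every decision is appended to `audit_log`, so the same structure that enforces the policy also produces the behavioral record described above.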

Implementing Proactive Guardrails: Protection via Azure AI Foundry

Beyond the foundational layer of identity, Microsoft has introduced sophisticated guardrails within the Azure AI Foundry platform to act as a proactive defensive perimeter for agentic deployments. These guardrails consist of predefined collections of administrative and operational controls that are assigned directly to specific AI models, creating a sandbox environment that limits the agent’s potential impact. By establishing these boundaries, companies can effectively prevent their agents from straying into prohibited data sets or engaging in high-risk behaviors that might jeopardize the broader network. This systematic approach allows security operations centers to evaluate the “blast radius” of an agent before it is ever connected to live production data. The primary objective is to move away from manually reviewing every action and instead implement a policy-driven architecture where the AI platform itself enforces safety standards, ensuring that autonomy never comes at the expense of security.

These proactive measures also include a robust flagging system designed to identify agents with “risky capabilities” during the development and testing phases of their lifecycle. For example, if an agent is designed with the ability to modify system configurations or execute code, the Azure AI Foundry platform highlights these permissions for additional scrutiny by human supervisors. This data-driven method for managing risk provides organizations with the necessary tools to curb autonomous sprawl while still reaping the productivity benefits of AI integration. By incorporating these administrative controls, Microsoft enables a shift toward “secure by design” principles, where the safety of an agent is verified long before it encounters a real-world threat. This structured oversight is essential for maintaining a stable environment, as it allows organizations to scale their AI initiatives without losing control over the complex web of interactions that define the modern agentic ecosystem.
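The flagging step can be sketched as a check run over agent definitions before deployment. The capability names and the manifest format below are assumptions made for illustration; they are not taken from Azure AI Foundry.

```python
from typing import Dict, Iterable, List

# Capabilities treated as risky, following the two examples in the text.
# The identifiers themselves are hypothetical.
RISKY_CAPABILITIES = {"execute_code", "modify_system_config"}


def flag_risky_agents(manifests: Dict[str, Iterable[str]]) -> Dict[str, List[str]]:
    """Map each agent name to the risky capabilities it declares, if any."""
    flagged: Dict[str, List[str]] = {}
    for name, capabilities in manifests.items():
        risky = sorted(set(capabilities) & RISKY_CAPABILITIES)
        if risky:
            flagged[name] = risky  # route these agents to human review
    return flagged


# Assumed example manifests for two agents still in development.
manifests = {
    "report-writer": ["read_documents", "summarize"],
    "ops-helper": ["read_logs", "execute_code", "modify_system_config"],
}
needs_review = flag_risky_agents(manifests)
```

Here only `ops-helper` is flagged, so the human review effort goes to the agent that can change systems or run code rather than to every agent in the pipeline.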

Leveraging AI-Driven Defense: The Role of Security Copilot

As the speed of machine-driven attacks continues to accelerate, human intervention alone is often insufficient to mitigate threats in real time, leading Microsoft to deploy specialized agents within Security Copilot. This “AI versus AI” strategy employs tools like the Security Triage Agent and the Security Analyst Agent to monitor, analyze, and respond to incidents with a level of efficiency that manual processes cannot match. The Triage Agent works by synthesizing vast amounts of telemetry data from across the enterprise, filtering out noise and presenting human responders with a prioritized list of critical events. This automation significantly reduces the “mean time to respond” by ensuring that security professionals are not bogged down by low-level alerts. By using the agent identity registry to track agentic behavior, these defensive tools can quickly identify when a malicious agent is attempting to masquerade as a legitimate internal process, enabling the rapid response that modern cyber defense requires.
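The filter-and-prioritize pattern described here can be sketched in a few lines. This is a toy model, not the Security Triage Agent's logic: the `Alert` fields, the 1 to 5 severity scale, and the scoring rule that boosts alerts involving unregistered agents are all assumptions.

```python
from dataclasses import dataclass
from typing import Iterable, List


@dataclass(frozen=True)
class Alert:
    """Hypothetical alert record assembled from enterprise telemetry."""
    source: str
    severity: int           # assumed scale: 1 (low) to 5 (critical)
    agent_registered: bool  # whether the acting agent exists in the identity registry


def score(alert: Alert) -> int:
    # Activity from an unregistered agent may indicate masquerading, so boost it.
    return alert.severity + (0 if alert.agent_registered else 2)


def triage(alerts: Iterable[Alert], min_score: int = 3) -> List[Alert]:
    """Drop low-scoring noise and return the rest, most urgent first."""
    kept = [alert for alert in alerts if score(alert) >= min_score]
    return sorted(kept, key=score, reverse=True)


# Assumed sample telemetry.
alerts = [
    Alert("endpoint", severity=2, agent_registered=True),
    Alert("identity", severity=4, agent_registered=True),
    Alert("network", severity=3, agent_registered=False),
]
queue = triage(alerts)
```

With this sample data the low-severity endpoint alert is filtered out, and the network alert from an unregistered agent is placed ahead of the higher-severity identity alert.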

Furthermore, the Security Analyst Agent is designed to perform deep, multi-step investigations that reconstruct complex attack paths across an organization’s entire infrastructure. It pulls data from disparate sources like Microsoft Defender and Sentinel, piecing together the timeline of a breach with high precision to identify vulnerabilities that might have otherwise remained hidden. This capability is complemented by Posture Agents, which continuously evaluate the overall data security configuration of the network and provide actionable recommendations for hardening the environment. Together, these intelligent entities create a layered defense-in-depth architecture where autonomous systems are under constant supervision by other specialized AI systems. This circular model of governance ensures that even as agents become more capable and independent, they are always operating within a supervised framework that prioritizes the integrity and confidentiality of the organization’s digital assets.

Evolving Toward a Zero Trust AI Framework: Continuous Safeguarding

The transition toward a world dominated by agentic AI has necessitated a significant evolution of the Zero Trust security model, with Microsoft adding a dedicated AI pillar to its strategic workshops. This change reflects an industry-wide consensus that identity must serve as the primary defensive perimeter, especially when dealing with software that acts with a high degree of autonomy. The goal of this updated framework is to move from a reactive posture, in which teams respond to breaches after they occur, to a model of continuous, automated safeguarding that anticipates potential points of failure. As technology continues to advance with the emergence of agentic browsers and multi-agent swarms, the security infrastructure must remain agile enough to keep pace. This requires a shift in mindset where every interaction, whether initiated by a human or a machine, is treated with the same level of skepticism and undergoes rigorous verification before access is granted to sensitive corporate data.

In the end, securing the agentic attack surface requires a fundamental reassessment of how autonomy and authority are distributed across the enterprise. Organizations that navigate this transition successfully do so by integrating identity management directly into their AI development pipelines, ensuring that no agent operates without a clear and verified mandate. Automated guardrails and AI-driven defense mechanisms allow these companies to mitigate risks while still embracing the efficiency gains offered by autonomous systems. Moving forward, the focus is on building a more transparent ecosystem where the actions of every nonhuman entity are fully auditable and aligned with corporate safety standards. By treating AI security as a core business function rather than a technical afterthought, leadership teams can secure their digital infrastructure against the next generation of threats while laying the groundwork for a more resilient and automated operational future.