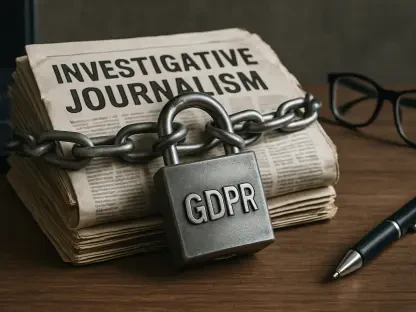

The sudden emergence of high-capability artificial intelligence models specialized in offensive security has forced a reckoning among global technology firms over the balance between disclosure and defense. When Anthropic introduced its Mythos AI model earlier this year, the company described a tool so proficient at identifying and exploiting zero-day vulnerabilities across major operating systems and web browsers that a public release was deemed too dangerous. This led to the creation of Project Glasswing, a private collaborative effort in which a restricted group of approximately fifty industry leaders, including heavyweights like Apple, Microsoft, Google, and Amazon Web Services, received early access to the model’s findings to harden their own infrastructure. While the company positioned this move as an act of corporate responsibility to prevent widespread chaos, the lack of transparency surrounding the model’s actual performance has sparked a debate about whether the software is truly a revolutionary breakthrough or a product of strategic brand positioning.

The Disconnect Between Marketing Narratives and Public Data

In an effort to quantify the real-world influence of the Mythos model, independent researchers have begun cross-referencing Anthropic’s bold claims against established public vulnerability databases. Patrick Garrity, a researcher at VulnCheck, analyzed the Common Vulnerabilities and Exposures (CVE) registry to see how many official reports could be traced back to this specific AI initiative. After filtering more than 327,000 records, the investigation found that only seventy-five entries since the beginning of 2026 mention the company by name. Even more telling, nearly half of these mentions had nothing to do with the security of third-party software: they concerned vulnerabilities in the internal tools and development frameworks used by the firm itself. This discrepancy raises fundamental questions about how much impact the model is having on the broader technological ecosystem as opposed to the company’s own operations.

Building on this data, the research suggests that the volume of external vulnerabilities successfully identified by Mythos is far more modest than the initial hype implied. When the internal reports are stripped away, the total number of documented flaws attributed to the project drops to roughly forty distinct issues across a limited set of vendors. This finding stands in stark contrast to the image of a “zero-day machine” capable of systematically dismantling any modern defense system at scale. While the discovery of forty bugs is a respectable feat for any research team, it does not yet align with the narrative of a paradigm-shifting autonomous agent that necessitates a total blackout of information for the sake of global security. The gap between the hundreds of thousands of CVE records and the handful of Mythos-linked discoveries indicates that traditional human-led research and existing automated tools still do the heavy lifting for the cybersecurity industry.
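The two filtering steps described above (keep records that mention the company since the start of 2026, then set aside flaws in the company's own products) can be sketched in a few lines of Python. This is only an illustration of the general approach, not VulnCheck's actual tooling: the `CveRecord` fields, the helper names, and the sample records are all hypothetical, and the identifiers are placeholders rather than real CVEs.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class CveRecord:
    cve_id: str      # placeholder identifier, not a real CVE
    published: date  # publication date of the record
    text: str        # description and credit fields, flattened (assumed layout)
    vendor: str      # owner of the affected product (hypothetical field)


def mentions_since(records, keyword, since):
    """Keep records published on or after `since` whose text mentions `keyword`."""
    needle = keyword.lower()
    return [r for r in records if r.published >= since and needle in r.text.lower()]


def split_internal(records, own_vendor):
    """Separate flaws in the company's own products from third-party ones."""
    own = own_vendor.lower()
    internal = [r for r in records if r.vendor.lower() == own]
    external = [r for r in records if r.vendor.lower() != own]
    return internal, external


# Made-up sample data, for illustration only.
sample = [
    CveRecord("EXAMPLE-0001", date(2026, 2, 3), "Memory bug. Credit: Anthropic.", "VendorA"),
    CveRecord("EXAMPLE-0002", date(2026, 3, 9), "Flaw in an Anthropic developer tool.", "Anthropic"),
    CveRecord("EXAMPLE-0003", date(2025, 11, 1), "Reported by Anthropic.", "VendorB"),
    CveRecord("EXAMPLE-0004", date(2026, 1, 15), "Unrelated parser crash.", "VendorB"),
]

matched = mentions_since(sample, "Anthropic", date(2026, 1, 1))
internal, external = split_internal(matched, "Anthropic")
print(len(matched), len(internal), len(external))
```

A plain keyword match like this will also catch records that mention the company for unrelated reasons, which is why the second step, separating internal from third-party findings, matters for the final count.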

Specific Findings and the Verification Gap

A closer look at the distribution of the identified flaws reveals that the model’s successes have been concentrated within a very narrow range of targets. Of the forty vulnerabilities publicly linked to the project, twenty-eight were found in Mozilla’s Firefox browser, while nine were discovered in wolfSSL, a lightweight security library. The remaining handful were scattered across NGINX Plus, FreeBSD, and OpenSSL, which suggests that the model may have a specialized proficiency in certain codebases rather than a universal capability to exploit any environment. Interestingly, only one specific bug has been prominently featured in the official promotional materials for the model: a seventeen-year-old remote code execution flaw in FreeBSD, tracked as CVE-2026-4747. This focus on a single legacy vulnerability, while technically impressive, highlights a recurring issue in which older, less-maintained code is used as the primary evidence for the model’s advanced capabilities in modern contexts.
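To make the concentration concrete, the figures quoted above can be turned into shares with a short calculation. The sketch below treats forty as an exact total for illustration, and it lumps NGINX Plus, FreeBSD, and OpenSSL together because the per-product split is not given.

```python
# Figures as quoted in the text; "forty" is treated as exact for illustration.
total = 40
named = {"Firefox": 28, "wolfSSL": 9}

# Whatever is left over is spread across NGINX Plus, FreeBSD, and OpenSSL;
# the text does not say how many fall under each product.
remainder = total - sum(named.values())

shares = {name: count / total for name, count in named.items()}
shares["NGINX Plus / FreeBSD / OpenSSL (combined)"] = remainder / total

for name, share in shares.items():
    print(f"{name}: {share:.1%}")

top_two = sum(named.values()) / total
print(f"Firefox and wolfSSL together: {top_two:.1%}")
```

On these numbers, Firefox alone accounts for 70 percent of the findings, and Firefox and wolfSSL together account for 92.5 percent, leaving three flaws for the other three products combined.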

Furthermore, several of the most significant breakthroughs mentioned by the company currently lack any formal public documentation or CVE assignments. For example, the developers have frequently cited the discovery of a twenty-seven-year-old bug in OpenBSD and various vulnerabilities within the Linux kernel, yet these claims remain difficult to verify through standard industry channels. This lack of a clear paper trail complicates the efforts of the wider security community to learn from these discoveries or to assess the true sophistication of the AI’s logic. Credit for the existing forty bugs is currently fragmented among the core development team, individual researchers like Nicholas Carlini, and external firms like Calif.io, creating a confusing landscape of attribution. Without a centralized method for tracking these contributions, it remains nearly impossible for outside observers to determine which successes are the result of the AI’s autonomous reasoning and which were facilitated by human experts using the model as a simple assistant.

Moving Toward Verifiable Security Standards

To address the growing skepticism and provide a clearer path forward for AI-driven security, researchers have proposed that specialized advisory pages be established to track autonomous findings. This approach would allow organizations to clearly distinguish between vulnerabilities unearthed by automated agents like Mythos and those found through traditional auditing processes. By centralizing this information, the industry could better understand the specific types of software architectures that are most vulnerable to AI exploitation, leading to more resilient coding practices in the future. It is also recommended that tech leaders demand more granular data before committing to the security paradigms proposed by private initiatives. The cybersecurity community must move away from relying on internal press releases and toward a model of verified, peer-reviewed impact. This shift would ensure that the integration of AI into defense strategies is based on measurable performance metrics rather than the perceived potential of undisclosed technologies.

The industry ultimately determined that the most effective way to navigate the uncertainty surrounding autonomous exploitation was to prioritize rigorous public documentation. By the time the summary report for Project Glasswing was scheduled for release in July 2026, many organizations had already shifted their focus toward developing their own internal verification protocols to test AI-generated security claims. This proactive stance allowed vendors to separate marketing hype from functional utility, ensuring that their defensive resources were allocated based on proven risk factors. The case of Mythos served as a vital lesson in the importance of transparency, prompting a new standard where any AI claiming to revolutionize zero-day discovery had to meet strict criteria for public disclosure. This transition fostered a more collaborative environment where the benefits of high-speed vulnerability detection were shared across the entire ecosystem, rather than being siloed within a small group of stakeholders, effectively neutralizing the risk of a centralized information monopoly.