Rupert Marais has spent years hardening endpoints, corralling unruly networks, and steering incident response through late‑night crises. In this conversation, he unpacks how four newly exploited flaws across SimpleHelp, Samsung MagicINFO 9 Server, and D-Link DIR-823X bend patching priorities, force tough replacement timelines, and reshape defenses from API keys to zip pipelines. He shares field-tested controls, fast triage methods, and how to turn KEV pressure into disciplined milestones, all while keeping one eye on Mirai noise and another on ransomware dwell.

Four exploited flaws just entered the KEV list, spanning SimpleHelp, Samsung MagicINFO 9 Server, and D-Link DIR-823X routers—how should teams triage across CVSS, exploitability, and business exposure, and what metrics or scoring tweaks have actually shifted your patch order in practice?

I start with three pivots: CVSS, verified exploitation, and asset criticality. A 9.9 on SimpleHelp with active abuse beats an 8.8 on a lab box every time. I weight “internet‑exposed” by 2x and “credentialed access required” by 0.6, which often flips queue order. When the KEV tag lands and the service is customer‑facing, it goes red, even if the score is 7.2.
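The weighting described above can be sketched as a small scoring function. This is a minimal illustration, not Marais's actual tooling: the 2x and 0.6 multipliers and the KEV "red" rule come from the answer, while the `Finding` fields, the function names, and the choice to multiply the weights together are assumptions.

```python
from dataclasses import dataclass

# Multipliers quoted in the interview; how they combine is an assumption.
INTERNET_EXPOSED_WEIGHT = 2.0
CREDENTIALED_ACCESS_WEIGHT = 0.6


@dataclass
class Finding:
    """Hypothetical record for one vulnerable asset."""
    name: str
    cvss: float
    internet_exposed: bool = False
    requires_credentials: bool = False
    in_kev: bool = False
    customer_facing: bool = False


def priority(f: Finding) -> float:
    """CVSS scaled up for internet exposure and down for credentialed access."""
    score = f.cvss
    if f.internet_exposed:
        score *= INTERNET_EXPOSED_WEIGHT
    if f.requires_credentials:
        score *= CREDENTIALED_ACCESS_WEIGHT
    return round(score, 2)


def is_red(f: Finding) -> bool:
    """KEV-listed and customer-facing goes red regardless of the score."""
    return f.in_kev and f.customer_facing


def triage(findings: list[Finding]) -> list[Finding]:
    """Red items first, then descending weighted score."""
    return sorted(findings, key=lambda f: (not is_red(f), -priority(f)))
```

With these assumptions, a 7.2 that is KEV-listed and customer-facing sorts ahead of an exposed 9.9, which in turn sorts ahead of an unexposed 8.8 lab box.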

With a federal deadline of May 8, 2026 to fix or retire affected systems, how do you plan long-lead replacements, budget approvals, and vendor coordination, and what milestones or checkpoints keep agencies from slipping?

I anchor plans to quarterly gates: inventory lock, funding commit, contract award, cutover. Long‑lead hardware gets ordered by the second quarter to beat slippage. Vendors get a dated runbook and a rollback plan by week one of staging. A monthly checkpoint shows burn‑down to May 8, 2026, with red flags at 10% drift.
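One way to make the 10% drift flag concrete is to compare the remaining asset count against a planned burn-down. The sketch below assumes a straight-line plan from a start date to the deadline, and assumes drift is measured as a fraction of the total inventory; neither detail is stated in the interview, and the function name is hypothetical.

```python
from datetime import date

DEADLINE = date(2026, 5, 8)  # federal deadline cited in the interview
DRIFT_RED_FLAG = 0.10        # 10% drift threshold cited in the interview


def burn_down_status(total: int, remaining: int, start: date, today: date,
                     deadline: date = DEADLINE) -> dict:
    """Compare actual remaining assets with a straight-line plan (assumed)."""
    total_days = (deadline - start).days
    if total <= 0 or total_days <= 0:
        raise ValueError("need a positive inventory and a start before the deadline")
    days_left = max((deadline - today).days, 0)
    expected_remaining = total * days_left / total_days
    # Positive drift means the team is behind the plan.
    drift = (remaining - expected_remaining) / total
    return {
        "expected_remaining": round(expected_remaining, 1),
        "drift": round(drift, 3),
        "red_flag": drift > DRIFT_RED_FLAG,
    }
```

For example, with 40 affected systems, a plan starting January 28, 2026, and 26 still unpatched on March 19, 2026, the plan expects 20 remaining, so drift is 15% and the checkpoint goes red.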

SimpleHelp CVE-2024-57726 enables low-privileged technicians to mint overly permissive API keys; how would you detect this abuse in logs, constrain key scopes, and rotate secrets at scale without breaking services?

I hunt for sudden key creation bursts and scope jumps to admin in minutes. Access logs should show the actor, target scope, and client IP for correlation. Scopes map to least‑privilege roles, and admin scopes require break‑glass approval. Rotation runs in waves with canary services, and any 401 spike rolls back within five minutes.
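The two hunts described above (creation bursts and jumps to admin scope) could look roughly like this. The event schema (`actor`, `actor_role`, `scope`, `ts`) is hypothetical and is not SimpleHelp's actual log format; the thresholds are placeholders to tune.

```python
from collections import defaultdict
from datetime import datetime, timedelta


def find_key_abuse(events, burst_threshold=3, window=timedelta(minutes=10)):
    """Flag key-creation bursts and admin-scope keys minted by non-admins.

    events: iterable of dicts with 'actor', 'actor_role', 'scope' and
    'ts' (datetime). This schema is an assumption for illustration.
    """
    alerts = []
    by_actor = defaultdict(list)
    for e in sorted(events, key=lambda e: e["ts"]):
        by_actor[e["actor"]].append(e)
        # Scope jump: a non-admin actor creating an admin-scoped key.
        if e["scope"] == "admin" and e["actor_role"] != "admin":
            alerts.append(("scope_jump", e["actor"], e["ts"]))

    for actor, evs in by_actor.items():
        start = 0
        for end in range(len(evs)):
            # Slide the window so it spans at most `window` of time.
            while evs[end]["ts"] - evs[start]["ts"] > window:
                start += 1
            if end - start + 1 >= burst_threshold:
                alerts.append(("burst", actor, evs[end]["ts"]))
                break  # one burst alert per actor is enough for triage
    return alerts
```

Each alert carries the actor and timestamp so it can be correlated with client IPs from the access log.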

For SimpleHelp CVE-2024-57728 (zip slip), what hardening steps—sandboxing, file path normalization, and allowlists—actually reduced risk in your environment, and how did you validate them with red-team or regression tests?

We extract to a temp jail as a non‑root user with a quota. Canonical path checks drop any “../” or absolute paths before write. Only allowlisted extensions and target directories survive. Red team fed crafted zips; success meant nothing escaped the jail and regression tests stayed green.
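A minimal sketch of the canonical path check and extension allowlist, using Python's standard `zipfile` module. The allowlist contents and the function name are assumptions; running as a non-root user and enforcing a disk quota are operating-system controls that this snippet does not cover.

```python
import os
import shutil
import zipfile

ALLOWED_EXTENSIONS = {".txt", ".json", ".png"}  # hypothetical allowlist


def safe_extract(zip_path: str, dest_dir: str) -> list[str]:
    """Extract into dest_dir, rejecting traversal, absolute paths and odd types."""
    dest_root = os.path.realpath(dest_dir)
    os.makedirs(dest_root, exist_ok=True)
    written = []
    with zipfile.ZipFile(zip_path) as zf:
        targets = []
        # Validate every member first so a bad archive writes nothing.
        for member in zf.infolist():
            if member.is_dir():
                continue
            name = member.filename.replace("\\", "/")
            if name.startswith("/") or ".." in name.split("/"):
                raise ValueError(f"unsafe path in archive: {member.filename!r}")
            if os.path.splitext(name)[1].lower() not in ALLOWED_EXTENSIONS:
                raise ValueError(f"extension not allowlisted: {member.filename!r}")
            target = os.path.realpath(os.path.join(dest_root, name))
            # Canonical check: the resolved path must stay inside the jail.
            if os.path.commonpath([dest_root, target]) != dest_root:
                raise ValueError(f"path escapes jail: {member.filename!r}")
            targets.append((member, target))
        for member, target in targets:
            os.makedirs(os.path.dirname(target), exist_ok=True)
            with zf.open(member) as src, open(target, "wb") as dst:
                shutil.copyfileobj(src, dst)
            written.append(target)
    return written
```

Validating the whole archive before writing anything means a crafted zip fails closed instead of leaving a partial extraction behind.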

Samsung MagicINFO 9 Server path traversal (CVE-2024-7399) allows arbitrary file writes as system authority; which EDR rules, file integrity monitors, or syscall blocks catch this reliably, and what false-positive tuning did you need?

File monitors watch system paths for web‑process writes with a deny‑by‑default stance. EDR flags any unexpected file create or write by the MagicINFO service outside its data tree. We block dangerous syscalls against sensitive directories and hash baseline configs hourly. For tuning, we allowlisted scheduled updates and filtered out noise from backup jobs.
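The hourly config baseline can be approximated with a hash snapshot and a diff. This is a sketch of the general file-integrity technique, not of any specific EDR or FIM product; the function names are hypothetical, and scheduling the job every hour is left to cron or a similar tool.

```python
import hashlib
import os


def snapshot(root: str) -> dict[str, str]:
    """Map each file under root (relative path) to its SHA-256 digest."""
    hashes = {}
    for dirpath, _dirs, files in os.walk(root):
        for name in files:
            full = os.path.join(dirpath, name)
            with open(full, "rb") as fh:
                digest = hashlib.sha256(fh.read()).hexdigest()
            hashes[os.path.relpath(full, root)] = digest
    return hashes


def diff(baseline: dict[str, str], current: dict[str, str]) -> dict[str, list[str]]:
    """Report files added, removed or changed since the baseline."""
    return {
        "added": sorted(set(current) - set(baseline)),
        "removed": sorted(set(baseline) - set(current)),
        "changed": sorted(
            p for p in baseline.keys() & current.keys() if baseline[p] != current[p]
        ),
    }
```

Paths touched by approved updates or backups would be filtered from the diff before alerting, which is where the false-positive tuning lands.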

D-Link DIR-823X command injection (CVE-2025-29635) hits end-of-life gear; how do you execute a decommission plan—asset discovery, segmentation, temporary controls, and replacement—while tracking risk burn-down weekly?

Start with discovery and tag every DIR‑823X by site and owner. Move them to a quarantine VLAN with ACLs and no outbound admin traffic. Stand up temp controls at the edge and fast‑track replacements. Each week, we report remaining count, blocked hits to /goform/set_prohibiting, and expected retire dates.

Ransomware operators reportedly used the SimpleHelp flaws as precursors; what pre-ransom “dwell” indicators have you seen—new admin API keys, lateral SMB traffic, or backup tampering—and how do you sequence containment without tipping off attackers?

I’ve seen quiet admin key creation followed by small, odd SMB probes. Backups show test restores failing, then policy edits. We isolate by segment first, then disable suspect keys, and finally reset creds. The goal is to choke access without the noisy ripcord that triggers detonation.

Mirai variants have targeted both MagicINFO and D-Link devices; what network baselines, rate limits, and egress controls disrupt botnet callbacks, and can you share packet-level signatures or heuristics that worked?

Baselines flag sudden bursts of tiny TCP flows from these devices. We rate‑limit outbound to new IPs and block uncommon ports by default. DNS egress is forced through a resolver with denylists. Heuristics: repetitive short HTTP posts with identical headers, and unusual keep‑alive patterns from routers.
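The "repetitive short HTTP posts with identical headers" heuristic might be prototyped as follows. The request record fields and both thresholds are assumptions chosen for illustration, not values from the interview, and a real deployment would read these records from a proxy or flow collector.

```python
from collections import Counter


def flag_beacons(requests, max_body=128, min_repeats=20):
    """Return sources sending many small POSTs with an identical header set.

    requests: iterable of dicts with 'src', 'method', 'body_len' and
    'headers' (dict). The schema and thresholds are hypothetical.
    """
    counts = Counter()
    for r in requests:
        if r["method"] != "POST" or r["body_len"] > max_body:
            continue
        # Order-insensitive fingerprint of header names and values.
        fingerprint = tuple(sorted((k.lower(), v) for k, v in r["headers"].items()))
        counts[(r["src"], fingerprint)] += 1
    return sorted({src for (src, _fp), n in counts.items() if n >= min_repeats})
```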

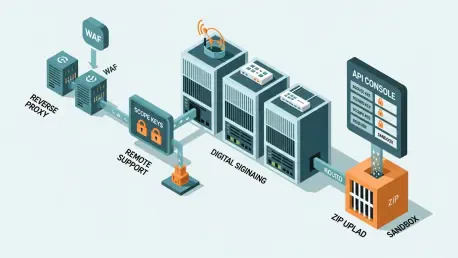

When patch windows are scarce, how do you stack compensating controls—WAF rules, reverse proxies, RBAC tightening, and service isolation—and which control gave the biggest measured risk reduction per hour of effort?

I front services with a reverse proxy enforcing strict paths and verbs. WAF blocks traversal and upload anomalies immediately. RBAC trimming removes dormant admin roles that attackers love. The proxy earned the best risk drop per hour because we shipped rules within a day.

Many orgs lack reliable SBOMs for remote support tools; how do you build an asset inventory that maps versions to CVEs, and what automated reconciliations or discovery scans finally made it trustworthy?

We tie CMDB entries to scanner fingerprints and the management plane. Versions map to CVEs and their CVSS scores, including the ones rated 9.9, 8.8, 7.5, and 7.2. Nightly reconciliations merge API pulls with authenticated scans. Any mismatch opens a ticket and blocks change approvals until fixed.
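A nightly reconciliation of this kind reduces to comparing two version maps. In the sketch below the asset IDs, version strings, ticket fields, and function names are all hypothetical; the only behavior taken from the answer is that any mismatch opens a ticket and blocks change approval.

```python
def reconcile(cmdb: dict[str, str], scanned: dict[str, str]) -> list[dict]:
    """Compare CMDB versions with scanner results; one ticket per mismatch."""
    tickets = []
    for asset in sorted(set(cmdb) | set(scanned)):
        recorded, observed = cmdb.get(asset), scanned.get(asset)
        if recorded is None:
            reason = "missing_from_cmdb"
        elif observed is None:
            reason = "not_seen_by_scanner"
        elif recorded != observed:
            reason = "version_mismatch"
        else:
            continue
        tickets.append(
            {"asset": asset, "cmdb": recorded, "scanned": observed, "reason": reason}
        )
    return tickets


def change_approval_allowed(tickets: list[dict]) -> bool:
    """Approvals stay blocked while any reconciliation ticket is open."""
    return not tickets
```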

For privilege escalation via mis-scoped API keys, what least-privilege template would you deploy, how would you enforce key lifetimes and rotation, and how do you test for permission creep over time?

Templates allow read‑only by default, with write split by domain. Admin scopes require time‑boxed keys with expiration in hours. Rotation is automated and staggered, with alerting on keys near expiry. Quarterly drift tests use synthetic users to prove denied actions stay denied.
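Lifetime enforcement and near-expiry alerting can be expressed as a periodic audit over the key inventory. The key record fields and both time limits below are assumptions; the interview only says admin keys expire "in hours" and that keys near expiry raise alerts.

```python
from datetime import datetime, timedelta

MAX_ADMIN_LIFETIME = timedelta(hours=8)  # assumed cap for admin-scoped keys
EXPIRY_WARNING = timedelta(hours=1)      # assumed alerting lead time


def audit_keys(keys, now: datetime):
    """Return (key_id, finding) pairs for policy violations and expiry alerts.

    keys: iterable of dicts with 'id', 'scope', 'created' and 'expires'
    (datetimes). This schema is hypothetical.
    """
    findings = []
    for k in keys:
        lifetime = k["expires"] - k["created"]
        if k["scope"] == "admin" and lifetime > MAX_ADMIN_LIFETIME:
            findings.append((k["id"], "admin_lifetime_too_long"))
        if k["expires"] <= now:
            findings.append((k["id"], "expired"))
        elif k["expires"] - now <= EXPIRY_WARNING:
            findings.append((k["id"], "near_expiry"))
    return findings
```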

Zip-based uploads are ubiquitous; what secure upload pipeline—MIME checks, unzip-to-temp, canonical path checks, and non-root execution—have you implemented, and how do you continuously fuzz it?

We gate on MIME sniffing plus extension checks. Unzips land in temp, non‑root, with disk and file count caps. Canonical checks reject traversal and absolute paths. A fuzz job mutates archives daily and flags any extraction that touches disallowed trees.
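Before any extraction, the gate itself can be a cheap pre-flight check. This sketch combines an extension check, a magic-byte sniff, and entry-count and size caps; the cap values and function name are assumptions. The declared sizes come from the archive's own headers, which a hostile file can understate, so the extraction step should still enforce its own limits.

```python
import zipfile

MAX_ENTRIES = 1000                   # assumed file count cap
MAX_TOTAL_BYTES = 50 * 1024 * 1024   # assumed uncompressed size cap
ZIP_MAGIC = b"PK\x03\x04"            # local file header signature


def preflight(path: str, filename: str,
              max_entries: int = MAX_ENTRIES,
              max_total_bytes: int = MAX_TOTAL_BYTES) -> tuple[bool, str]:
    """Cheap checks before extraction. Returns (accepted, reason)."""
    if not filename.lower().endswith(".zip"):
        return False, "extension"
    with open(path, "rb") as fh:
        # Empty archives start with a different signature and are rejected too.
        if fh.read(4) != ZIP_MAGIC:
            return False, "magic"
    try:
        with zipfile.ZipFile(path) as zf:
            infos = zf.infolist()
    except zipfile.BadZipFile:
        return False, "corrupt"
    if len(infos) > max_entries:
        return False, "too_many_entries"
    if sum(i.file_size for i in infos) > max_total_bytes:
        return False, "too_large"
    return True, "ok"
```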

Agencies must prove KEV compliance; what evidence packages—ticket trails, patch proofs, config snapshots, and network scans—satisfy auditors, and how do you automate collection to avoid quarter-end scrambles?

The package includes change tickets, before/after scans, and dated screenshots. We add config diffs, service hashes, and KEV references. A pipeline gathers artifacts on patch close and stores them immutably. Audits become exports, not scavenger hunts.

For legacy routers you can’t replace immediately, how would you harden them—strict ACLs, management plane isolation, read-only configs, and outbound blocks—and what monitoring hooks expose command-injection attempts?

Apply ACLs that only permit known management IPs. Isolate the management plane and set configs to read‑only except during approved windows. Block outbound except to known update hosts. Monitor for POSTs to /goform/set_prohibiting and sudden config writes.
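A monitoring hook for the endpoint named above can start as a log filter. The sketch assumes access logs in a common-log-style format from an upstream proxy or firewall (the routers themselves may not produce such logs); the regular expression and function name are illustrative.

```python
import re
from collections import Counter

WATCHED_PATH = "/goform/set_prohibiting"  # endpoint named in the interview

# Assumed line shape: '<src> ... "<METHOD> <path> HTTP/x.y" ...'
LOG_LINE = re.compile(r'^(?P<src>\S+) .*?"(?P<method>[A-Z]+) (?P<path>\S+)')


def count_injection_probes(lines) -> dict[str, int]:
    """Count POSTs to the watched endpoint, grouped by source address."""
    hits = Counter()
    for line in lines:
        m = LOG_LINE.match(line)
        if not m:
            continue
        path = m.group("path").split("?", 1)[0]  # ignore any query string
        if m.group("method") == "POST" and path == WATCHED_PATH:
            hits[m.group("src")] += 1
    return dict(hits)
```

The per-source counts feed directly into the weekly "blocked hits" figure and into temporary ACLs for repeat offenders.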

Incident response playbooks often skip vendor-specific steps; how would you tailor playbooks for SimpleHelp, MagicINFO, and SOHO routers, and what step-by-step actions shorten mean time to contain?

Include exact service stops, log paths, and API endpoints per product. For SimpleHelp, revoke suspect keys first, then rotate creds. For MagicINFO, lock file writes and restore known‑good configs. For routers, pull from the edge, push temp ACLs, and replace fast.

Threat intel tied these CVEs to Mirai and ransomware; how do you translate that into actionable detections—YARA, Sigma, or behavioral analytics—and what feedback loop do you use to prune noisy alerts?

We ship Sigma for traversal, zip anomalies, and odd admin key events. YARA covers dropped binaries and signature overlap with Mirai variants. Behavioral models watch outbound patterns and privilege jumps. Weekly reviews cull noisy rules and promote high‑fidelity hits.

What is your forecast for exploited enterprise software and IoT vulnerabilities over the next 24 months, and how should defenders rebalance investments between patch velocity, network isolation, and identity controls?

Expect steady exploitation of remote tools and SOHO gear, mirroring the four KEV additions. Patch fast where you can, but invest equally in isolation and identity guardrails. Network walls blunt Mirai‑style noise; identity stops quiet privilege climbs. Balance all three, and tie progress to dated milestones before May 8, 2026.