The speed with which artificial intelligence has moved from a curious collection of chatbots to a core engine of global financial markets and logistics management has few precedents in the history of industrial computing. Organizations that once viewed these systems as mere productivity enhancers now find themselves reliant on them for core security, decision-making, and structural continuity. Consequently, the conversation has moved away from mere capability toward the more urgent matter of control. Without a transparent foundation, the very technology intended to accelerate growth can quickly become an unmanageable liability that compromises an entire corporate ecosystem.

As these autonomous systems settle into the bedrock of commerce, the internal mechanics of how they operate are no longer just a concern for data scientists; they are a priority for the boardroom. The shift toward open infrastructure represents a fundamental realization that opaque, proprietary systems introduce systemic risks that are difficult to mitigate. When the internal logic of a model is hidden, the ability to govern that model (to ensure it follows legal mandates, ethical guidelines, and operational protocols) is severely diminished. True governance requires more than just oversight; it requires the ability to inspect, modify, and audit the foundational layers of the technology itself.

The Shift: From Experimental Novelty to Corporate Backbone

Artificial intelligence has graduated from the “experimental product” phase to become a core pillar of enterprise infrastructure. In the earlier years of AI development, businesses were content to experiment with standalone tools that offered niche benefits, such as drafting emails or generating marketing copy. Today, AI has become the foundational plumbing of global commerce, integrated into everything from fraud detection in banking to real-time supply chain adjustments. This evolution changes the rules of engagement for every business leader: the stakes have shifted from exploring what AI can do to ensuring that these capabilities do not become a massive operational liability.

The move toward open infrastructure is a strategic response to the reality that gated, black-box models often create more friction than they resolve in a corporate setting. When a technology is peripheral, the risks of being locked into a single vendor’s proprietary ecosystem are manageable. But when that technology becomes the backbone of the enterprise, reliance on a proprietary system creates a single point of failure that can disrupt entire business lines. Modern leaders are realizing that for AI to function as reliable infrastructure, it must be built on standards that prioritize interoperability and transparency rather than vendor-controlled secrecy.

Furthermore, the maturity of these systems necessitates a move away from the “move fast and break things” mentality that characterized early AI deployment. In a governed enterprise environment, reliability and predictability are far more valuable than the latest experimental feature. Open infrastructure allows organizations to stabilize their operations by choosing models that are well-understood and thoroughly tested by a global community. This transition ensures that the enterprise maintains control over its own destiny, rather than being subject to the sudden changes in pricing, availability, or logic that often occur within proprietary AI ecosystems.

Understanding the Lifecycle of Software Maturation and Its Risks

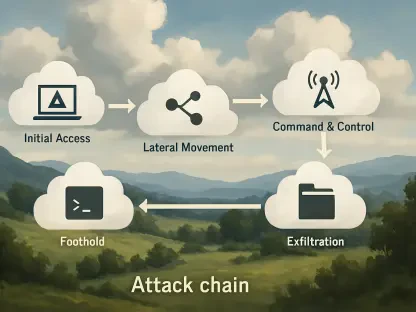

History provides a clear template for how software evolves, typically moving through three distinct stages: a standalone product, an integrated platform, and finally, foundational infrastructure. In the early “product” stage, closed, “walled garden” approaches often work well because they allow developers to control the user experience and iterate quickly. However, once a technology reaches the infrastructure stage, these closed systems become a systemic risk. When technology is embedded in high-stakes operations such as network security and automated decision-making, the inability to see inside the system creates what is known as “infrastructure drag.”

As AI becomes the connective tissue of the enterprise, relying on black-box models creates a concentrated point of failure that no traditional governance framework can easily solve. If the underlying logic of a decision-making model is inaccessible, the organization cannot meaningfully account for the outcomes that model produces, even though it remains answerable for them. This lack of transparency is a significant hurdle for industries under heavy regulatory scrutiny, such as healthcare or finance. Governance must be built on the bedrock of transparency; without the ability to inspect the internal weights and biases of a model, the enterprise is effectively flying blind.

The risks associated with proprietary infrastructure extend beyond regulatory compliance to the very heart of operational stability. When a proprietary vendor updates a model, the downstream effects on an enterprise’s custom-built applications can be unpredictable and destructive. This instability is unacceptable for foundational systems. Open infrastructure mitigates this risk by providing a stable, inspectable base that does not change without the organization’s consent. By moving to open standards, companies ensure that their critical infrastructure remains under their own jurisdiction, protected from the volatility of the external software market.
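One way to make "does not change without the organization's consent" concrete is to pin each approved model artifact to a cryptographic digest and refuse to load anything that does not match. The sketch below is a minimal illustration of that idea using Python's standard `hashlib`; the function names and the notion of an "approved digest" registry are assumptions made for illustration, not part of any particular platform.

```python
import hashlib
from pathlib import Path


def sha256_of_file(path: Path, chunk_size: int = 1 << 20) -> str:
    """Return the hex SHA-256 digest of a file, reading it in chunks."""
    digest = hashlib.sha256()
    with path.open("rb") as handle:
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def verify_pinned_artifact(path: Path, approved_digest: str) -> bool:
    """True only if the file on disk matches the digest that was approved.

    `approved_digest` is a hypothetical value recorded when the model
    version was reviewed and signed off internally.
    """
    return sha256_of_file(path) == approved_digest.lower()
```

A deployment script could call `verify_pinned_artifact` before loading weights and abort on a mismatch, so that a changed model file is noticed before it reaches production.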

Deconstructing the Pillars: Open Infrastructure for Margin Protection

The move to open-source AI foundations provides three distinct advantages that directly influence an enterprise’s bottom line, starting with the resolution of the “diagnostic bottleneck.” In complex software environments, technical teams must be able to quickly distinguish between errors caused by poor data retrieval and internal model hallucinations. With gated systems, this process is nearly impossible because the internal mechanics are hidden. Open infrastructure allows engineers to peer into the model’s processing layers, drastically reducing the time and labor costs associated with troubleshooting and maintaining AI-driven applications.
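A first pass at the diagnostic question (was it retrieval or the model?) can be sketched even before looking inside the model. The example below is a deliberately crude illustration: given a fact the answer should have contained, it checks whether the fact appeared in the retrieved context. The `Fault` categories, the `triage` function, and the substring matching are all assumptions chosen for brevity; a real pipeline would likely need semantic matching and, with open models, inspection of internal activations.

```python
from enum import Enum


class Fault(Enum):
    NONE = "none"              # the answer contains the expected fact
    RETRIEVAL = "retrieval"    # the fact never reached the model
    GENERATION = "generation"  # the fact was in context but the answer missed it


def triage(expected_fact: str, retrieved_passages: list[str], answer: str) -> Fault:
    """Classify a wrong answer by checking where the expected fact went missing."""
    fact = expected_fact.lower()
    if fact in answer.lower():
        return Fault.NONE
    in_context = any(fact in passage.lower() for passage in retrieved_passages)
    return Fault.GENERATION if in_context else Fault.RETRIEVAL
```

For instance, `triage("Paris", ["The capital of France is Paris."], "It is Lyon.")` returns `Fault.GENERATION`, pointing the team at the model rather than the retrieval layer.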

Another critical pillar of margin protection involves addressing the growing concerns regarding data privacy and latency. For many enterprises, the process of anonymizing sensitive data to satisfy the requirements of external API calls is both time-consuming and expensive. By running open models locally or within private cloud environments, companies can bypass these hurdles entirely. This localized approach not only secures sensitive intellectual property but also reduces the latency that often plagues cloud-based proprietary models. Faster response times and lower data-handling costs translate directly into improved operational efficiency and higher margins.

Finally, open infrastructure prevents the erosion of margins by allowing network engineers to accurately size hardware deployments. In a proprietary model, the compute requirements are often a moving target, leading companies to pay premium rates for high-compute queries that may not be necessary for simple tasks. Open-source models allow for better orchestration, where simple tasks can be routed to smaller, cost-effective models while reserving expensive resources for high-complexity queries. This granular control over compute allocation ensures that the organization is not over-provisioning its hardware or overpaying for API access, keeping the cost of innovation manageable.
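The routing idea described above can be sketched in a few lines. This is a toy illustration under stated assumptions: the model names, the cost figures, the keyword list, and the scoring rule are all hypothetical, and a production router would more plausibly use a trained classifier or measured token counts.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelTier:
    name: str                  # hypothetical model identifier
    cost_per_1k_tokens: float  # illustrative figure, not real pricing


SMALL = ModelTier("small-local-model", 0.1)
LARGE = ModelTier("large-hosted-model", 2.0)

# Hypothetical keywords treated as signs of a demanding request.
COMPLEX_HINTS = ("analyze", "compare", "multi-step", "prove", "reconcile")


def estimate_complexity(prompt: str) -> int:
    """Crude score: one point per 200 words plus one per matching keyword."""
    lowered = prompt.lower()
    keyword_hits = sum(1 for hint in COMPLEX_HINTS if hint in lowered)
    return len(lowered.split()) // 200 + keyword_hits


def route(prompt: str, threshold: int = 1) -> ModelTier:
    """Send low-scoring prompts to the small tier, everything else to the large one."""
    return LARGE if estimate_complexity(prompt) >= threshold else SMALL
```

With these assumptions, a short translation request lands on the small tier, while a prompt asking to compare and reconcile two contracts is routed to the large one.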

Expert Insights: Security and the Fallacy of Gated Capabilities

Industry veterans, including IBM’s Rob Thomas, argue that the most effective way to harden AI infrastructure is through broad, external scrutiny rather than concealment. The emergence of autonomous models capable of identifying software vulnerabilities, such as the findings related to the “Claude Mythos” experiments, highlights a significant paradox. The more powerful an AI model becomes, the more dangerous it is to keep its inner workings hidden from the defenders who are using it. In an era where attackers can use AI to find exploits, defenders need every advantage possible to understand how those exploits are being generated and how to stop them.

The concept of “security through obscurity” is a failing strategy at the scale of global enterprise infrastructure. When a model’s logic is hidden, the vendor is the only entity capable of fixing flaws, creating a bottleneck that attackers can exploit. In contrast, open infrastructure benefits from a global pool of researchers and developers who can identify logic flaws and refine security protocols much faster than any single vendor’s internal team could ever hope to. By adopting open standards, enterprises are essentially crowdsourcing their security efforts, ensuring that their defenses are constantly being tested and improved by the best minds in the field.

Furthermore, expert consensus suggests that the most resilient systems are those that are built to be inspected. When a model is open, the community can develop specialized security wrappers and monitoring tools that are tailored to specific industry needs. This ecosystem of third-party security tools simply cannot exist for closed models in the same way. The ability to verify the integrity of a model’s training data and the safety of its output is a non-negotiable requirement for any organization that handles sensitive information. Transparency is not just a matter of ethics; it is the most practical path toward creating a secure digital environment.

Strategies: Transitioning to a Governed AI Orchestration Layer

To implement a robust governance framework, enterprise leaders should adopt a strategy of decoupling the application layer from the underlying foundation model. This approach involves prioritizing “orchestration agility,” where the organization develops the internal capability to swap models based on specific workload requirements, cost constraints, or privacy mandates. By not tying the enterprise’s software to a single proprietary API, leaders ensure that their applications remain functional even if a specific model provider fails or changes its terms. This decoupling is the first step toward true technological independence and long-term stability.
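In code, this decoupling usually amounts to having the application depend on a small interface rather than on any one provider's client. The sketch below assumes a single `generate` method and two stand-in backends that simply echo their input; the class names are hypothetical, and real backends would wrap a self-hosted model or a vendor SDK behind the same interface.

```python
from typing import Protocol


class TextModel(Protocol):
    """The only contract the application layer is allowed to depend on."""

    def generate(self, prompt: str) -> str: ...


class LocalOpenModel:
    """Stand-in for a self-hosted open-weights model (hypothetical)."""

    def generate(self, prompt: str) -> str:
        return f"[local] {prompt}"


class HostedVendorModel:
    """Stand-in for a proprietary API client (hypothetical)."""

    def generate(self, prompt: str) -> str:
        return f"[vendor] {prompt}"


class Orchestrator:
    """Holds named backends and lets operators swap the active one."""

    def __init__(self, backends: dict[str, TextModel], default: str) -> None:
        if default not in backends:
            raise KeyError(f"unknown backend: {default}")
        self._backends = backends
        self._active = default

    def swap(self, name: str) -> None:
        if name not in self._backends:
            raise KeyError(f"unknown backend: {name}")
        self._active = name

    def ask(self, prompt: str) -> str:
        return self._backends[self._active].generate(prompt)
```

Because application code only ever calls `Orchestrator.ask`, moving a workload from the vendor backend to the local one is a call to `swap("local")` rather than a rewrite.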

Leaders must also treat transparency as a non-negotiable design requirement for any AI integration. This means ensuring that any AI integrated into core operations is subject to continuous inspection and that there are clear protocols for auditing the model’s performance. Building a diverse ecosystem that includes inputs from researchers, startups, and internal developers allows a company to turn the potential risks of autonomous AI into a sustainable advantage. This diversity ensures that the governance framework is not one-dimensional but is instead capable of addressing the multifaceted challenges of modern AI deployment, from ethical bias to technical reliability.
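An auditing protocol needs, at minimum, a record of what each model was asked and what it returned, kept in a form that makes later tampering detectable. The following is a minimal in-memory sketch of a hash-chained audit log; the field names and the `AuditLog` class are assumptions for illustration, timestamps are omitted to keep the example deterministic, and a real system would persist entries to durable, access-controlled storage.

```python
import hashlib
import json
from dataclasses import dataclass, field

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first entry


def _hash_body(body: dict) -> str:
    """Hash a canonical JSON encoding of the entry body."""
    encoded = json.dumps(body, sort_keys=True).encode("utf-8")
    return hashlib.sha256(encoded).hexdigest()


@dataclass
class AuditLog:
    entries: list[dict] = field(default_factory=list)

    def record(self, model_id: str, prompt: str, output: str) -> dict:
        """Append one decision, linking it to the hash of the previous entry."""
        previous_hash = self.entries[-1]["entry_hash"] if self.entries else GENESIS_HASH
        body = {
            "model_id": model_id,
            "prompt": prompt,
            "output": output,
            "previous_hash": previous_hash,
        }
        entry = {**body, "entry_hash": _hash_body(body)}
        self.entries.append(entry)
        return entry

    def verify(self) -> bool:
        """Recompute every hash and link; False if any entry was altered."""
        previous_hash = GENESIS_HASH
        for entry in self.entries:
            body = {k: v for k, v in entry.items() if k != "entry_hash"}
            if body["previous_hash"] != previous_hash:
                return False
            if _hash_body(body) != entry["entry_hash"]:
                return False
            previous_hash = entry["entry_hash"]
        return True
```

Chaining each entry to the previous one means that editing an old record invalidates its stored hash, which `verify` detects on the next check.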

Finally, the transition to a governed AI orchestration layer requires a cultural shift within the IT department. Teams must move away from being mere consumers of external AI services to becoming active orchestrators of internal AI resources. This involves investing in the talent and infrastructure necessary to host, tune, and monitor open-source models. While this may require an upfront investment, the long-term payoff is a more resilient, cost-effective, and transparent infrastructure. By taking control of the orchestration layer, the enterprise ensures that it remains the final authority on how AI is used to serve its customers and protect its interests.

The journey toward open AI infrastructure is defined by a shift from closed-loop systems to a more transparent and collaborative framework. Enterprises are realizing that the risks of opaque models far outweigh the temporary convenience of quick-fix proprietary solutions. To address these challenges, organizations are prioritizing the decoupling of their software layers and investing in the technical talent required to manage their own model orchestration. This strategic move allows companies to maintain higher margins while simultaneously strengthening their security posture against increasingly sophisticated digital threats. By embracing transparency as a core architectural requirement, the modern enterprise can turn the volatility of the AI revolution into a governed and sustainable competitive advantage. The practical goal is to ensure that every automated decision can be audited, every compute query is optimized for cost, and every piece of sensitive data remains within a protected, private environment.