The silent background processes that maintain modern software have fundamentally transformed the traditional concept of a perimeter, turning every installed application into a potential gateway for external code. In the current landscape of 2026, software is no longer a static entity that sits dormant after installation; it functions as a dynamic service that continuously evolves through autonomous background activity. This shift is driven primarily by a mechanism known as “runtime fetch,” which lets applications pull updates, plugins, and dependency refreshes directly from the internet without human intervention. While this model offers real convenience to individual consumers who want the latest security patches, it creates a serious structural vulnerability in the enterprise. By allowing these autonomous connections, organizations permit a standing execution path between external, often third-party, infrastructure and the core of their internal networks, bypassing the manual vetting that was once standard for deploying new code.

The Mechanics of Runtime Fetch and Hidden Execution Paths

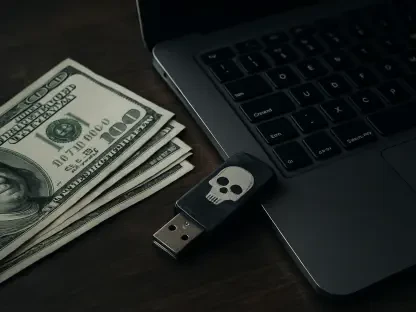

Standard security architectures rely heavily on the ability to distinguish between authorized and unauthorized traffic, yet the rise of automated updates has blurred these lines significantly. When an application initiates a runtime fetch to update its components, modern security tools like firewalls and Endpoint Detection and Response (EDR) systems typically classify this traffic as expected behavior. This classification occurs because the software itself is already signed, trusted, and integrated into the system’s daily operations. Consequently, the background download and execution of new scripts or binaries often evade the rigorous inspection and staging protocols that govern other types of network activity. This creates a persistent blind spot where the primary risk does not necessarily originate from a flaw in the application’s source code, but rather from the inherent trust placed in the delivery mechanism. This standing execution path remains open indefinitely, providing a pre-approved channel that threat actors can potentially exploit to deliver malicious payloads under the guise of routine maintenance.
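To make the difference between trusting the channel and inspecting the content concrete, here is a minimal Python sketch of a staging gate. It assumes the organization keeps its own record of approved artifacts, keyed by file name, with a SHA-256 digest captured when the update was reviewed; the names `stage_update` and `sha256_of` and the pinned-digest dictionary are hypothetical and not part of any particular product. A runtime fetch, by contrast, only has to satisfy whatever checks the vendor's own updater performs.

```python
import hashlib
import hmac
import tempfile
from pathlib import Path


def sha256_of(path: Path) -> str:
    """Return the hex SHA-256 digest of a file, read in chunks."""
    h = hashlib.sha256()
    with path.open("rb") as f:
        for chunk in iter(lambda: f.read(65536), b""):
            h.update(chunk)
    return h.hexdigest()


def stage_update(artifact: Path, pinned_digests: dict[str, str]) -> bool:
    """Hypothetical gate: allow staging only if the artifact's digest matches
    a value pinned internally at review time, not one supplied by the vendor."""
    expected = pinned_digests.get(artifact.name)
    if expected is None:
        return False  # never reviewed, so never staged
    # Constant-time comparison of the computed and pinned digests.
    return hmac.compare_digest(sha256_of(artifact), expected)


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as tmp:
        update = Path(tmp) / "editor-update.bin"
        update.write_bytes(b"vendor build as reviewed by IT")
        pinned = {update.name: sha256_of(update)}  # recorded during review
        print(stage_update(update, pinned))  # True

        # Simulate the delivery host serving different bytes under the same name.
        update.write_bytes(b"different payload served from the same URL")
        print(stage_update(update, pinned))  # False
```

The gate does not make an update trustworthy on its own; it only guarantees that what runs is byte-for-byte what was reviewed.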

In a professional setting, scale magnifies the risks of these independent update channels. An enterprise network rarely consists of just a few applications; it is a complex ecosystem of hundreds or even thousands of software titles, many with their own auto-update behavior. The result is a large, layered set of persistent external execution channels that security teams find nearly impossible to monitor comprehensively. The traditional notion of a secure perimeter breaks down when dozens of vendors effectively hold a “key” to execute code on internal machines at any time. For this reason, the focus of cybersecurity must shift from simply patching vulnerabilities to managing the authority of the applications themselves. When software is permitted to rewrite its own binaries without oversight, the organization cedes control over its technical integrity. This lack of visibility means that a compromise in a vendor’s infrastructure could lead to an immediate, widespread breach across the enterprise before conventional defenses raise any alarm.
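A first step toward managing this exposure is simply counting it. The sketch below assumes an inventory in which each installed title records its vendor, whether it updates itself, and which external hosts it contacts to do so; the `InstalledApp` record, the `execution_channels` helper, and the `.example` host names are hypothetical. Every vendor that appears in the result holds one of the “keys” described above.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class InstalledApp:
    """One entry in a hypothetical software inventory."""
    name: str
    vendor: str
    self_updates: bool  # does the app fetch and apply its own updates?
    update_hosts: tuple[str, ...] = ()  # external hosts contacted for those updates


def execution_channels(inventory: list[InstalledApp]) -> dict[str, set[str]]:
    """Map each vendor whose software self-updates to the external hosts it uses.
    Apps whose own updater is disabled contribute nothing."""
    channels: dict[str, set[str]] = {}
    for app in inventory:
        if app.self_updates:
            channels.setdefault(app.vendor, set()).update(app.update_hosts)
    return channels


if __name__ == "__main__":
    inventory = [
        InstalledApp("browser", "VendorA", True, ("update.vendor-a.example",)),
        InstalledApp("vpn-client", "VendorB", True,
                     ("cdn.vendor-b.example", "dl.vendor-b.example")),
        InstalledApp("pdf-viewer", "VendorC", False),
        InstalledApp("cad-suite", "VendorD", True, ("files.vendor-d.example",)),
    ]
    channels = execution_channels(inventory)
    print(len(channels), "vendors can push code")  # 3 vendors can push code
    print(sum(len(h) for h in channels.values()), "external hosts")  # 4 external hosts
```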

Lessons from the Notepad++ Breach: Exploiting the Delivery Chain

The theoretical dangers of automated updates were realized during a significant incident involving the popular text editor Notepad++, which served as a stark warning for the global tech community. In this specific scenario, the attackers did not focus their efforts on finding a zero-day vulnerability within the application’s code or utilizing complex phishing campaigns to gain entry. Instead, they successfully targeted and compromised the hosting environment responsible for delivering update content to millions of users. By replacing legitimate update binaries with malicious versions, the threat actors effectively hijacked a trusted delivery chain to distribute malware. This incident highlighted a critical shift in the modern threat landscape, demonstrating that if an attacker can compromise the delivery platform, they no longer need to bypass local security defenses. The software’s own update mechanism becomes the delivery vehicle for the attack, turning a routine security practice into a direct path for network infiltration that is both efficient and difficult to detect through standard monitoring tools.
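The core lesson of a delivery-chain compromise can be shown with a toy model. In the sketch below, which does not reproduce the actual Notepad++ update protocol, a hypothetical `UpdateHost` serves a binary together with a checksum file. An attacker who controls the host replaces both, so a check against the host's own checksum still passes, while a check against a digest recorded out of band (for example, by the organization's patch team before the compromise) fails.

```python
import hashlib
from dataclasses import dataclass


@dataclass
class UpdateHost:
    """Toy model of a vendor download server that serves a binary and,
    alongside it, a checksum file. Both live on the same infrastructure."""
    binary: bytes
    checksum: str

    def compromise(self, payload: bytes) -> None:
        # Control of the host means control of everything it serves.
        self.binary = payload
        self.checksum = hashlib.sha256(payload).hexdigest()


def same_origin_check(host: UpdateHost) -> bool:
    """Verify the binary against a checksum fetched from the same host."""
    return hashlib.sha256(host.binary).hexdigest() == host.checksum


def independent_check(host: UpdateHost, pinned: str) -> bool:
    """Verify the binary against a digest obtained through a separate channel."""
    return hashlib.sha256(host.binary).hexdigest() == pinned


if __name__ == "__main__":
    release = b"legitimate release build"
    host = UpdateHost(release, hashlib.sha256(release).hexdigest())
    pinned = host.checksum  # recorded by IT before the compromise
    host.compromise(b"malicious replacement")
    print(same_origin_check(host))          # True: the checksum was replaced too
    print(independent_check(host, pinned))  # False
```

The same reasoning applies to code signing: a signature is an independent check only if the signing key is not held on the infrastructure the attacker controls.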

The fallout from such breaches underscores a systemic issue: any software capable of self-updating is a potential liability, regardless of its primary function. From web browsers and administrative tools to VPN clients and specialized engineering software, reliance on independent update mechanisms creates an attack surface that is difficult to manage. When an organization allows these programs to update autonomously, it effectively delegates its security posture to the operational integrity of every third-party vendor in its inventory. This delegation is particularly dangerous because it assumes that every vendor maintains strong security across its entire infrastructure, including its content delivery networks (CDNs), Domain Name System (DNS) providers, and certificate management processes. As the Notepad++ incident illustrates, the weakness often lies in these secondary services rather than in the vendor’s primary development environment. The enterprise is therefore forced to trust a vast, largely invisible web of interconnected services, any one of which could be the weak link that lets a malicious actor inject code into the corporate network.
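The “weak link” argument can be put in rough numbers. Under the simplifying and admittedly unrealistic assumption that each service in the delivery chain is compromised independently with the same probability `p` over some period, the chance that at least one of `n` services is compromised is `1 - (1 - p)**n`. The per-service probability and the three services per vendor (CDN, DNS, certificates) below are hypothetical values chosen only to show how the figure grows with the size of the inventory.

```python
def p_any_compromised(p: float, n: int) -> float:
    """Probability that at least one of n independent services is compromised,
    if each is compromised with probability p (a simplifying assumption)."""
    if not 0.0 <= p <= 1.0 or n < 0:
        raise ValueError("p must be in [0, 1] and n must be non-negative")
    return 1.0 - (1.0 - p) ** n


if __name__ == "__main__":
    p = 0.001  # hypothetical per-service compromise probability
    for vendors in (10, 100, 300):
        n = 3 * vendors  # hypothetical: CDN, DNS, and certificates per vendor
        print(f"{vendors:>4} vendors, {n:>4} services: {p_any_compromised(p, n):.1%}")
```

With these made-up inputs the result rises from roughly 3% at 10 vendors to roughly 26% at 100 and 59% at 300. Real compromise rates are neither uniform nor independent, so the point is the shape of the curve, not the specific figures.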

The Failure of Vendor Trust: Moving Toward Controlled Deployment

Relying on vendor trust as a primary security strategy has proven to be an increasingly fragile approach in the face of sophisticated supply chain attacks. Many IT administrators assume their security posture is sound because they install software only from reputable, well-known developers. This perspective is dangerously narrow because it ignores the full lifecycle of software delivery. To trust an automated update, an organization must maintain confidence in the vendor’s DNS providers, hosting platforms, and third-party libraries for as long as the updater remains enabled, which in practice means indefinitely. In the modern interconnected economy, even the most reputable companies can suffer a compromise somewhere in their broader ecosystem. Furthermore, a single compromised endpoint in an enterprise environment is rarely an isolated event; it often gives threat actors the foothold they need to move laterally through shared identity and access management systems. By allowing unvetted updates to run on even a few machines, an organization puts the integrity of its entire network at risk, which makes the verification half of the “trust but verify” model more important than ever.

To mitigate these risks, organizations must transition from a model of inherited trust to a framework of controlled trust, facilitated by centralized patch management platforms. This strategic shift involves disabling the internal auto-update mechanisms of individual applications and consolidating all update activities under a single, administrative umbrella. By stripping execution authority away from the applications themselves, IT teams can reclaim control over what code enters their environment and when it is deployed. This approach provides several key advantages, including the ability to test and stage updates in a sandbox environment before they reach critical systems. Centralization also ensures version consistency across the entire network, eliminating the “drift” that occurs when different machines run different software versions. Most importantly, it drastically reduces the number of external execution paths that security teams must monitor. Instead of managing dozens of independent background fetches, the organization focuses on a single, verified stream of updates, thereby reinforcing the overall resilience of the corporate infrastructure.
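The controlled-trust workflow can be sketched as a small data model. The Python below assumes that each application's built-in updater has already been disabled, so machines change version only when a central catalog approves it; the ring names (`sandbox`, `pilot`, `production`), the `CentralCatalog` class, and `drift_report` are hypothetical stand-ins for whatever a real patch management platform provides. Promotion to a ring requires the same version to have passed the previous ring, and the drift report lists machines whose installed versions do not match what the catalog approved for their ring, including titles the catalog does not manage at all.

```python
from dataclasses import dataclass, field
from typing import Optional

# Hypothetical staging rings, in the order an update must pass through them.
RINGS = ("sandbox", "pilot", "production")


@dataclass
class CentralCatalog:
    """Single source of truth for approved versions: ring -> app -> version."""
    approved: dict[str, dict[str, str]] = field(default_factory=dict)

    def promote(self, app: str, version: str, ring: str) -> None:
        """Approve `version` of `app` for `ring`, but only if the same version
        was already approved for the preceding ring."""
        idx = RINGS.index(ring)  # raises ValueError for an unknown ring
        if idx > 0:
            previous = RINGS[idx - 1]
            if self.approved.get(previous, {}).get(app) != version:
                raise ValueError(f"{app} {version} has not passed {previous}")
        self.approved.setdefault(ring, {})[app] = version


def drift_report(
    catalog: CentralCatalog, ring: str, installed: dict[str, dict[str, str]]
) -> dict[str, list[tuple[str, str, Optional[str]]]]:
    """Return machine -> [(app, installed_version, approved_version)] for every
    installed app whose version differs from the catalog's approval for `ring`.
    An approved_version of None means the catalog does not manage that app."""
    expected = catalog.approved.get(ring, {})
    drift: dict[str, list[tuple[str, str, Optional[str]]]] = {}
    for machine, apps in installed.items():
        for app, version in apps.items():
            want = expected.get(app)
            if version != want:
                drift.setdefault(machine, []).append((app, version, want))
    return drift


if __name__ == "__main__":
    catalog = CentralCatalog()
    for ring in RINGS:
        catalog.promote("editor", "8.1.0", ring)  # passes each ring in order

    try:
        catalog.promote("vpn-client", "5.2", "production")  # skips earlier rings
    except ValueError as err:
        print("blocked:", err)

    fleet = {
        "ws-01": {"editor": "8.1.0"},
        "ws-02": {"editor": "8.2.0"},  # changed outside the catalog
    }
    print(drift_report(catalog, "production", fleet))
```

Keeping the catalog as the only writer of versions is what turns “drift” from an invisible side effect into a report that can be reviewed.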

The analysis of automated update vulnerabilities shows that the convenience of background processes often comes at a direct cost to organizational visibility and control. The “runtime fetch” model establishes a standing bridge for external threats that bypasses traditional defensive perimeters. The countermeasure is a zero-trust approach to software maintenance, in which no application is permitted to modify itself without explicit administrative approval. A centralized patch management system serves as the necessary buffer, turning silent, autonomous execution into a deliberate and verified workflow. Organizations should also audit their software inventories to identify and disable hidden update channels in non-essential tools. Adopting a standard of “vetted deployment,” in which new code is inspected and staged before it reaches production environments, substantially reduces the risk of delivery chain hijacking while preserving the benefits of a patched, up-to-date software ecosystem.