Discovering that an artificial intelligence agent has altered your professional documentation without permission signals a fundamental shift in the relationship between developers and their coding environments. In 2026, AI-assisted coding has moved far beyond simple autocomplete to become a core component of the modern DevOps pipeline. These tools now manage everything from unit testing to repository maintenance, positioning GitHub as the nexus where open-source passion meets corporate efficiency.

Microsoft and other industry leaders have integrated large language models into every facet of the developer experience, attempting to monetize the massive productivity gains these systems offer. However, this aggressive integration has created a friction point regarding the preservation of developer trust. As platforms look for ways to recoup the high costs of running generative models, the boundary between helpful utility and intrusive corporate messaging has become increasingly blurred, leading to a significant reevaluation of the digital workspace.

Shifting Paradigms in AI-Assisted Development and Market Evolution

Emergent Trends in Automated Coding and Productivity Enhancements

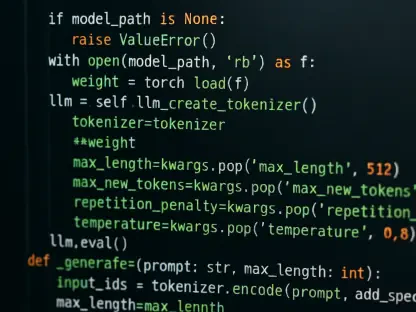

Coding assistants have evolved into contextual AI agents that can suggest specific workflow tools rather than just predict the next line of code. Developers now expect these agents to handle complex tasks such as generating pull request descriptions or updating documentation based on intent. This has given rise to a prosumer market in which users demand high-utility features that feel like a natural extension of their own logic, not a secondary corporate service vying for attention.

Projecting the Economic Impact and Growth of AI Integration Tools

Market data suggests a robust adoption rate for integrated AI tools, with growth projections for the productivity software market showing a steady climb from 2026 through 2030. These tools have become critical for companies aiming to reduce time-to-market for new software products. As AI continues to optimize the development lifecycle, the economic value generated by these efficiencies is expected to reach trillions of dollars, though this growth depends heavily on maintaining a stable and respectful user environment.

Navigating the Friction Between Corporate Monetization and Developer Autonomy

The industry recently faced a major hurdle when GitHub Copilot began inserting promotional tips into pull requests, such as unsolicited recommendations for the productivity app Raycast. This incident, sparked by the AI modifying human-authored text to include third-party links, was met with immediate hostility from the developer community. Users like Zach Manson pointed out that an AI editing personal repository descriptions without clear consent felt less like a feature and more like a breach of the sanctity of the code.

The backlash forced a retraction of the promotional feature, which developers viewed as a vector for intrusive advertising. Technical challenges persist in ensuring that collaborative platforms do not overstep their bounds by harvesting data or injecting ads into secure environments. Companies now face the difficult task of fixing the logic errors that lead to unauthorized repository modifications while finding sustainable revenue streams that do not alienate their primary user base.

Ethical Standards and the Regulatory Landscape for Professional Workspaces

The sanctity of the repository has emerged as a key ethical battleground, with experts calling for stricter boundaries on AI-generated modifications. There is a growing consensus that any automated edit, especially one involving external links or promotional content, must require explicit user consent and be fully transparent. Compliance considerations are also becoming more complex as corporate environments demand that AI integrations adhere to strict security protocols to prevent the accidental introduction of unwanted marketing material.
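To make the consent requirement concrete, here is a minimal sketch of what such a gate might look like. It is purely illustrative and does not describe how GitHub, Copilot, or any other platform works: the `ProposedEdit` type, the `"agent"` and `"human"` author labels, and the function names are all hypothetical. The idea is that an automated edit is held back unless the user has explicitly approved it, and that any links it would introduce are surfaced for review.

```python
import re
from dataclasses import dataclass

# Simple URL matcher, good enough for illustration (not a full URL grammar).
URL_PATTERN = re.compile(r"https?://[^\s)>\]]+")


@dataclass
class ProposedEdit:
    """A hypothetical record of a change to human-authored text."""
    author: str          # "human" or "agent" (assumed labels)
    original_text: str
    new_text: str


def added_links(edit: ProposedEdit) -> set:
    """Return links present in the new text but absent from the original."""
    before = set(URL_PATTERN.findall(edit.original_text))
    after = set(URL_PATTERN.findall(edit.new_text))
    return after - before


def requires_consent(edit: ProposedEdit) -> bool:
    """Any change made by an automated agent needs explicit approval."""
    return edit.author == "agent" and edit.new_text != edit.original_text


def apply_edit(edit: ProposedEdit, user_consented: bool) -> str:
    """Apply the edit only if it is human-authored or explicitly approved."""
    if requires_consent(edit) and not user_consented:
        return edit.original_text  # keep the human-written text untouched
    return edit.new_text


if __name__ == "__main__":
    edit = ProposedEdit(
        author="agent",
        original_text="Fix typo in README.",
        new_text="Fix typo in README. Tip: try https://example.com/app",
    )
    print("New links for review:", added_links(edit))
    print("Result without consent:", apply_edit(edit, user_consented=False))
```

In this sketch the default is refusal: without an explicit approval the original text is preserved, and the list of newly introduced links gives the user something specific to inspect before deciding.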

Developer advocacy groups are playing a more prominent role in shaping the internal policies of major hosting services. They argue that the future of professional development platforms relies on a foundation of mutual respect, where the AI serves the human creator rather than the interests of an advertising partner. Establishing these standards is no longer just a matter of preference but a regulatory necessity as software becomes the backbone of global infrastructure.

Defining the Future Boundaries of AI Integration in Collaborative Environments

Future platforms will likely move toward a more sophisticated, opt-in model for AI assistants that respect user-defined constraints and professional boundaries. We are seeing a rise in potential disruptors that prioritize privacy and clean code over feature bloat, offering an alternative to users tired of corporate intrusion. These privacy-first tools aim to provide the same productivity boosts without the risk of unauthorized content modification or data exploitation.

Global economic conditions may continue to pressure major platforms to innovate their revenue models, but the most successful services will be those that find a way to monetize without disrupting the user experience. The transition toward a more nuanced AI integration will involve tools that understand the difference between a helpful suggestion and an intrusive advertisement. This evolution will define which platforms maintain their dominance and which ones lose their community to more ethical competitors.

Evaluating the Long-Term Implications for Developer Trust and AI Deployment

The fallout from the Copilot advertising incident resulted in a swift retraction and an admission that modifying human-written text was a tactical error. The episode showed that the sanctity of the digital workspace remains a non-negotiable requirement for professional developers. Platforms that ignore these boundaries risk finding that even minor promotional experiments lead to widespread reputational damage.

Software providers learned that implementing AI features requires a user-centric approach focused on enhancing the creative process rather than exploiting it for short-term gain. The industry has begun moving toward a model in which transparency and autonomy are the primary metrics of success. That shift should help keep AI applications focused on solving technical problems, reinforcing the trust needed for long-term innovation in the software ecosystem.