The digital security landscape shifted unexpectedly when a major operational oversight resulted in the public exposure of the entire source code repository for Claude Code, a premier AI-driven development tool. This incident originated within the npm package registry, a cornerstone of modern software distribution, where a newly published version of the tool inadvertently included a source map file that served as a direct roadmap to the company’s internal storage. Security researcher Chaofan Shou first identified this vulnerability, noting that the included metadata pointed to an unprotected archive hosted on the Cloudflare R2 storage platform. This configuration allowed any individual with the correct URL to download a comprehensive zip file containing the core logic of the application. While the tech industry frequently discusses high-level cyberattacks, this event highlighted how a simple misconfiguration in the deployment pipeline could bypass sophisticated security perimeters.

Technical Anatomy of the Source Leak

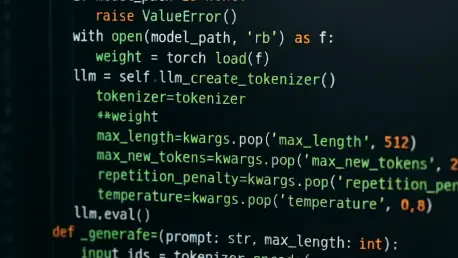

The technical specifics of the leak revealed a significant lapse in the automated build process used by the development team. Source maps are typically used during development to help engineers debug minified or bundled JavaScript by mapping it back to its original TypeScript source. In this instance, the map file was not stripped before the package was pushed to the public registry, effectively acting as a digital skeleton key. Upon extraction, the archive yielded approximately 1,900 individual TypeScript files and over 512,000 lines of proprietary code. This dataset included internal libraries, implementations of slash commands, and the built-in tools that define the platform’s functionality. The exposure of so much unobfuscated code provided an unprecedented look into the internal architecture of a tool that was meant to remain strictly proprietary and shielded from competitors.
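To make the role of a source map concrete, the sketch below reads one as JSON and lists the original files it names. The field names (version, sources, sourcesContent, mappings) come from the Source Map version 3 format; the example map itself is hand-written and hypothetical, and is not taken from the leaked package.

```python
import json


def list_original_sources(source_map_text: str) -> list[tuple[str, bool]]:
    """Return (path, has_embedded_content) for each original file named in a source map."""
    source_map = json.loads(source_map_text)
    sources = source_map.get("sources", [])
    # "sourcesContent" is optional; when present it runs parallel to "sources".
    contents = source_map.get("sourcesContent") or []
    result = []
    for index, path in enumerate(sources):
        embedded = index < len(contents) and contents[index] is not None
        result.append((path, embedded))
    return result


# Hypothetical map for illustration only; paths and contents are invented.
example_map = json.dumps({
    "version": 3,
    "file": "cli.js",
    "sources": ["../src/main.ts", "../src/commands/help.ts"],
    "sourcesContent": ["export const main = () => {};", None],
    "names": [],
    "mappings": "",
})

for path, embedded in list_original_sources(example_map):
    print(path, "(content embedded)" if embedded else "(path only)")
```

Even when no original content is embedded, the paths alone reveal the internal layout of a project, which is why a published map file deserves the same scrutiny as the code it describes.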

The speed at which the code then spread across the internet demonstrated how nearly impossible it is to contain data once it becomes publicly accessible. Within a remarkably short timeframe, the source code was mirrored on multiple platforms, with GitHub becoming the primary hub for copies. Despite attempts to mitigate the damage, the code was forked more than 41,500 times, ensuring its persistence across the global developer community. Although the original uploader eventually removed the primary archive to avoid potential legal repercussions, the sheer number of independent copies meant that the intellectual property was, in effect, permanently archived. This rapid proliferation underscored the inherent risks of automated package distribution, where a single human error during a release cycle can result in the immediate loss of control over years of research and development effort.

Institutional Response and Security Hygiene

In the wake of the incident, official statements attributed the leak to a packaging oversight rather than a malicious breach of the core infrastructure. The organization emphasized that while their proprietary algorithms and internal tools were exposed, no sensitive customer data or internal credentials were included in the leaked files. This distinction served to reassure the user base regarding the safety of their private information, even as the company’s intellectual property was scrutinized by security enthusiasts and industry rivals alike. The event prompted an internal audit of the release management protocols to identify exactly where the failure occurred within the continuous integration and deployment pipelines. Experts noted that such failures often stem from a lack of strict filtering in configuration such as the .npmignore file or the files field in package.json, which determine what is included in a published package.
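As a rough illustration of the filtering point, the sketch below inspects a package.json string and warns when the files allowlist is missing or looks too broad. When files is omitted, npm includes everything not excluded by ignore rules. The function name, the list of broad patterns, and the warning wording are assumptions made for this example, and the check is a simple heuristic rather than a reimplementation of npm's matching rules.

```python
import json

# Patterns assumed to publish everything under the package root (not exhaustive).
BROAD_PATTERNS = {"*", "**", "**/*", ".", "./"}


def audit_manifest(manifest_text: str) -> list[str]:
    """Return heuristic warnings about the 'files' allowlist in a package.json string."""
    manifest = json.loads(manifest_text)
    files = manifest.get("files")
    if files is None:
        # Without an allowlist, the published contents depend on ignore rules alone.
        return ["no 'files' allowlist; published contents depend on ignore rules"]
    warnings = []
    for pattern in files:
        if pattern in BROAD_PATTERNS:
            warnings.append(f"'files' entry {pattern!r} matches everything")
        elif pattern.endswith(".map"):
            warnings.append(f"'files' entry {pattern!r} explicitly includes map files")
    return warnings


# Hypothetical manifests for illustration only.
print(audit_manifest('{"name": "demo"}'))
print(audit_manifest('{"name": "demo", "files": ["dist/cli.js"]}'))
print(audit_manifest('{"name": "demo", "files": ["*", "dist/*.map"]}'))
```

A narrow allowlist that names only the intended build outputs is usually easier to audit than a long list of exclusions, because anything not listed stays out of the package by default.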

The fallout from this event provided critical lessons for the broader technology sector regarding the management of automated build systems and the risks of developer-centric tools. Organizations prioritized the implementation of automated scanning tools that specifically searched for map files and sensitive metadata before any package reached a production-level registry. Engineering teams adopted more rigorous verification steps within their deployment pipelines, ensuring that all artifacts were strictly audited by automated scripts designed to flag unintended file inclusions. Furthermore, the industry shifted toward a philosophy where debugging symbols and source maps were hosted in private, authenticated environments rather than being bundled with public distributions. By treating the deployment configuration as a high-stakes security component rather than a routine administrative task, firms successfully reduced the likelihood of similar exposures. These proactive measures established a new standard for registry hygiene and protected proprietary assets.
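A pre-publish scan of the kind described above might look like the following sketch. It assumes the package has already been packed into a gzip-compressed tarball and flags both map files and sourceMappingURL references inside JavaScript files. The function names and the demo archive are hypothetical; the demo tarball is built in memory so the example runs without touching a real package.

```python
import io
import tarfile


def find_source_map_artifacts(tarball_bytes: bytes) -> list[str]:
    """List map files and sourceMappingURL references found in a .tgz package."""
    findings = []
    with tarfile.open(fileobj=io.BytesIO(tarball_bytes), mode="r:gz") as archive:
        for member in archive.getmembers():
            if not member.isfile():
                continue
            if member.name.endswith(".map"):
                findings.append(f"{member.name}: source map file")
            elif member.name.endswith((".js", ".mjs", ".cjs")):
                data = archive.extractfile(member).read()
                if b"sourceMappingURL=" in data:
                    findings.append(f"{member.name}: sourceMappingURL reference")
    return findings


def build_demo_tarball() -> bytes:
    """Build a small in-memory tarball with invented contents for the demo."""
    demo_files = {
        "package/package.json": b"{}",
        "package/cli.js": b"console.log(1);\n//# sourceMappingURL=cli.js.map\n",
        "package/cli.js.map": b'{"version": 3}',
    }
    buffer = io.BytesIO()
    with tarfile.open(fileobj=buffer, mode="w:gz") as archive:
        for name, body in demo_files.items():
            info = tarfile.TarInfo(name=name)
            info.size = len(body)
            archive.addfile(info, io.BytesIO(body))
    return buffer.getvalue()


for finding in find_source_map_artifacts(build_demo_tarball()):
    print(finding)
```

In a release pipeline, a check like this would typically run against the packed artifact and fail the job when the list of findings is not empty, so that the exact bytes bound for the registry are what gets inspected.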