The rhythmic clicking of mechanical rotors once echoed through the halls of German military outposts, carrying with it a misplaced sense of invulnerability. In 1918, Arthur Scherbius patented a device he believed would provide complete commercial secrecy, unaware that his invention would eventually become the centerpiece of one of the greatest intelligence failures in military history. The Enigma machine, with its quintillions of possible settings, was mathematically formidable for its time, yet it was undone not just by superior mathematics, but by the very human flaws of its creators and operators. Today, as we transition from mechanical rotors to quantum-resistant algorithms, the ghost of Enigma serves as a stark reminder: technical complexity is never a substitute for comprehensive security.

This historical episode offers more than just a lesson in cryptanalysis; it provides a foundational framework for understanding risk in a hyper-connected world. While the machine itself was a marvel of engineering, its failure proved that a secure system is only as strong as the ecosystem surrounding it. Modern defenders often fall into the same trap as the Enigma’s designers, focusing on the strength of the lock while leaving the key under a doormat.

From Mechanical Ciphers to Digital Defense

The transition of Enigma from a commercial tool to a weaponized instrument of the Nazi regime highlights the historical roots of modern supply chain security and nationalized technology. When the German military adopted the device, they introduced modifications that increased its complexity, assuming that physical possession of the machine was the only way to compromise it. With only 350 of the original 40,000 machines surviving today, the physical scarcity of these devices mirrors the current fragility of our global hardware supply chains.

The scarcity of surviving machines is a reminder that hardware integrity is a finite resource that requires constant vigilance. Organizations that rely on proprietary or closed-source hardware often repeat the mistakes of the past by assuming that obscurity equals security. Understanding the Enigma is therefore not merely a lesson in history; it is an examination of how perceived technological superiority can create a dangerous blind spot for organizations, which makes it a critical case study for any professional tasked with defending modern infrastructure.

The Architectural Flaws and the Fallacy of Invincibility

The Technical Hubris of Mathematical Complexity

The German military relied on the sheer number of possible settings, roughly 158 quintillion, believing that the time required to brute-force the cipher rendered it impenetrable. This reliance on “mathematical theater” ignored the possibility of cognitive leaps by adversaries, such as the Polish cryptographers who first broke the cipher in 1932 by applying group theory to the machine’s rotor movements. They did not attack the math directly through exhaustion; they attacked the logical structure of the implementation.
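As a rough check on that figure, the arithmetic can be sketched in a few lines of Python. The breakdown is an assumption that the text above does not spell out: it uses the commonly cited Army configuration of three rotors chosen from a set of five, 26 starting positions per rotor, and ten plugboard cables, and it ignores ring settings. The function names are illustrative only.

```python
from math import factorial

# Assumed configuration (not given in the article): 3 rotors picked from 5,
# 26 start positions per rotor, 10 plugboard cables, ring settings ignored.
ROTORS_AVAILABLE = 5
ROTORS_USED = 3
ALPHABET_SIZE = 26
PLUG_CABLES = 10


def rotor_orders(available: int, used: int) -> int:
    """Count ordered selections of `used` rotors from `available`."""
    return factorial(available) // factorial(available - used)


def plugboard_pairings(letters: int, cables: int) -> int:
    """Count ways to join `cables` disjoint letter pairs among `letters` sockets."""
    unplugged = letters - 2 * cables
    return factorial(letters) // (
        factorial(unplugged) * factorial(cables) * 2 ** cables
    )


def keyspace() -> int:
    """Multiply the three independent choices into one settings count."""
    return (
        rotor_orders(ROTORS_AVAILABLE, ROTORS_USED)
        * ALPHABET_SIZE ** ROTORS_USED
        * plugboard_pairings(ALPHABET_SIZE, PLUG_CABLES)
    )


if __name__ == "__main__":
    print(f"{keyspace():,}")
```

Under these assumptions the product comes to about 1.59 × 10^20, in line with the 158 quintillion figure. The count measures only the cost of exhaustive search; it says nothing about structural shortcuts, which is where the cipher actually failed.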

The Human Element as the Ultimate Vulnerability

The most sophisticated encryption in the world can be undone by a bored or distracted operator. Historical records show that cryptographers at Bletchley Park exploited “cillis”—predictable rotor settings chosen by lazy operators, such as their girlfriends’ initials or simple keyboard patterns. This mirrors modern-day password fatigue and the persistent danger of weak credentials in high-security environments. No matter how many rotors or bits are added to a system, the person behind the keyboard remains the most significant variable in the security equation.
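The kind of pattern an attacker would try first can be sketched as a simple screening function. This is a hypothetical heuristic, not a reconstruction of any Bletchley Park procedure: the function name, the three rules, and the keyboard rows (a QWERTZ-style layout assumed here) are all illustrative, and initials of real people are out of scope because they cannot be detected without outside knowledge.

```python
# Keyboard rows in a QWERTZ-style layout; treated as an assumption.
KEYBOARD_ROWS = ("QWERTZUIO", "ASDFGHJK", "PYXCVBNML")
ALPHABET = "ABCDEFGHIJKLMNOPQRSTUVWXYZ"


def _is_run(text: str, sequence: str) -> bool:
    """True if `text` appears in `sequence` read forwards or backwards."""
    return text in sequence or text[::-1] in sequence


def looks_predictable(indicator: str) -> bool:
    """Flag three-letter settings that a tired operator might plausibly pick."""
    key = indicator.upper()
    if len(key) != 3 or not all(ch in ALPHABET for ch in key):
        raise ValueError("expected exactly three letters A-Z")
    if len(set(key)) == 1:        # repeated letter, e.g. AAA
        return True
    if _is_run(key, ALPHABET):    # alphabetical run, e.g. ABC or ZYX
        return True
    # neighbouring keys on one keyboard row, e.g. QWE or DSA
    return any(_is_run(key, row) for row in KEYBOARD_ROWS)


if __name__ == "__main__":
    for candidate in ("AAA", "ABC", "QWE", "KXQ"):
        print(candidate, looks_predictable(candidate))
```

The modern analogue is a credential denylist: a defender who runs the same cheap checks an attacker would run can reject weak choices before they reach production. Diagonal or vertical keyboard patterns are not covered by this sketch.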

The Absence of Red Teaming and Adversarial Thinking

A primary reason for the Enigma’s downfall was a catastrophic “failure of imagination.” The Nazi regime failed to rigorously test their own system against potential compromises because they fundamentally underestimated their opponents. In modern terms, they lacked a red teaming culture, choosing to believe in their own marketing rather than proactively seeking out the “known unknowns” within their protocols. They operated under the assumption that if they could not break it, no one else could, a bias that still plagues many executive boards today.

Expert Perspectives on Historical Cyber Intelligence

Marc Sachs, a senior vice president at the Center for Internet Security, notes that the Enigma story is a cautionary tale about the “illusion of security.” Sachs argues that the destruction of thousands of machines by retreating German forces was an admission of vulnerability that came far too late to change the war’s outcome. This desperate attempt to wipe the slate clean highlights a reactive mindset that is still common in incident response today, where organizations focus on damage control rather than structural prevention.

Experts at Bletchley Park, including Alan Turing, demonstrated that breaking a system often requires looking beyond the code to the physical and behavioral patterns of the users. They pioneered a philosophy that remains the cornerstone of modern threat hunting and behavioral analytics. By identifying the “signatures” of specific German operators, the Allies were able to predict future moves, proving that metadata and user behavior can be just as revealing as the decrypted content itself.

Strategies for Integrating Enigma Lessons into Modern Security

Implementing Rigorous Adversarial Testing

Professionals should adopt a “never trust, always verify” mindset by employing red teams to poke holes in established protocols. The Germans should have questioned the Enigma’s reflector mechanism, which prevented a letter from ever being encrypted as itself. That guarantee looked harmless, but it let codebreakers rule out any alignment of a guessed plaintext in which a letter matched the ciphertext at the same position. Modern teams must likewise identify and eliminate “impossible” states in their own logic, because every structural constraint of this kind hands attackers a logical shortcut around otherwise complex encryption layers.
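To make the reflector weakness concrete, here is a minimal sketch of how the "no letter encrypts to itself" property narrows down where a guessed plaintext fragment (a crib) could sit in a message. The function name and the toy strings are hypothetical; the code illustrates only the elimination step, not a full attack.

```python
def possible_crib_positions(ciphertext: str, crib: str) -> list[int]:
    """Return offsets where `crib` could align with `ciphertext`.

    An offset is ruled out as soon as any crib letter equals the
    ciphertext letter at the same position, because the reflector
    makes that pairing impossible.
    """
    ciphertext, crib = ciphertext.upper(), crib.upper()
    positions = []
    for offset in range(len(ciphertext) - len(crib) + 1):
        window = ciphertext[offset:offset + len(crib)]
        if all(c != p for c, p in zip(window, crib)):
            positions.append(offset)
    return positions


if __name__ == "__main__":
    # Toy data: offset 2 is excluded because "CD" would encrypt to "CD".
    print(possible_crib_positions("ABCDEF", "CD"))
```

With the toy input, offset 2 is discarded and offsets 0, 1, 3, and 4 remain. The longer the crib, the more offsets a single coincidence eliminates, which is why a property meant to look like extra scrambling ended up leaking information.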

Addressing the Behavioral Gap in Security Protocols

Security is only as strong as its least disciplined user. Organizations must move beyond theoretical security and design systems that account for human nature. This includes automating high-risk configurations to prevent the digital equivalent of “cillis” and ensuring that security measures do not incentivize “lazy” workarounds by employees. Creating a culture where security is usable ensures that protocols are followed rather than circumvented for the sake of convenience.
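One way to take the choice out of the operator's hands is to generate settings mechanically. The sketch below uses Python's standard `secrets` module; the function name and the three-letter default are illustrative assumptions rather than a prescription for any particular system.

```python
import secrets
import string


def random_indicator(length: int = 3) -> str:
    """Draw each letter independently from the operating system's CSPRNG."""
    return "".join(
        secrets.choice(string.ascii_uppercase) for _ in range(length)
    )


if __name__ == "__main__":
    print(random_indicator())
```

Because every letter is drawn uniformly and independently, no value is more likely than another, so the habits that make human-chosen settings guessable never enter the picture.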

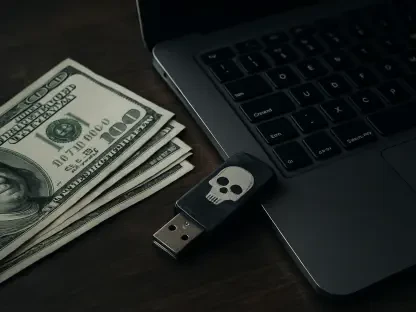

Prioritizing Supply Chain Integrity and Hardware Security

The history of the Enigma illustrates the importance of controlling the lifecycle of security hardware. Modern professionals should apply these lessons by verifying the integrity of their components and maintaining a clear understanding of the physical and digital provenance of their encryption tools. As we look toward the next decade of defense, the focus must shift toward building resilient systems that assume the adversary already has the machine. That assumption calls for a strategy built on zero-trust architectures and continuous verification rather than the fleeting hope of absolute secrecy.