Rupert Marais is a veteran in the cybersecurity trenches, known for his deep dives into endpoint defense and the architectural nuances of complex cloud environments. With an extensive background in network management and defensive strategy, he has spent years helping organizations navigate the shifting sands of cloud-native threats. In this conversation, he unpacks the mechanics of a high-profile breach where a trusted vulnerability scanner became the Trojan horse for a massive data exfiltration. We explore the ripple effects of compromised API keys, the nightmare of auditing unstructured data, and the critical separation between public-facing cloud services and internal networks.

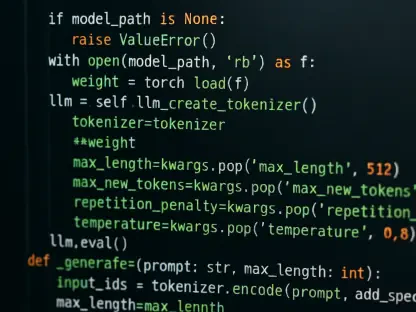

When a supply chain attack involving a tool like Trivy compromises an API key, how do initial reconnaissance efforts typically unfold? Could you walk us through the technical steps an actor takes to pivot from a single stolen credential to launching automated secret scanners like TruffleHog?

The moment a supply chain attack subverts a trusted tool like the Trivy scanner, the traditional perimeter essentially dissolves because the threat is already inside the house. In the case of the March 19 compromise, the threat actors didn’t just stumble into the system; they exploited a compromised version of the software received through legitimate update channels. Once they possessed that initial AWS API key, their first move was to establish a more permanent foothold by creating and attaching a new access key to a user account. This wasn’t just a random probe; it was a calculated pivot to gain control over dozens of other AWS accounts affiliated with the European Commission. By the time they launched TruffleHog, they were essentially using a high-powered spotlight to find every other “key to the kingdom” by systematically calling the Security Token Service to validate what other doors they could unlock.
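For defenders, the two behaviors described above (a new access key attached to a user, followed by a burst of Security Token Service calls that test harvested keys) both leave traces in audit logs. The following is a minimal sketch, not a production detector. It assumes the logs are available as dictionaries shaped like AWS CloudTrail records, and that key validation appears as `GetCallerIdentity` calls. The function name and the `identity_check_threshold` value are hypothetical.

```python
from collections import defaultdict

# Event source/name pairs as they appear in AWS CloudTrail records.
KEY_CREATION = ("iam.amazonaws.com", "CreateAccessKey")
IDENTITY_CHECK = ("sts.amazonaws.com", "GetCallerIdentity")


def summarize_suspicious_activity(records, identity_check_threshold=5):
    """Flag new access keys and bursts of STS identity checks.

    `records` is an iterable of dicts shaped like CloudTrail records.
    `identity_check_threshold` is a hypothetical tuning value, not a standard.
    """
    new_keys = []
    checks_by_ip = defaultdict(set)

    for record in records:
        event = (record.get("eventSource"), record.get("eventName"))
        identity = record.get("userIdentity", {})
        if event == KEY_CREATION:
            new_keys.append({
                "actor": identity.get("arn"),
                "time": record.get("eventTime"),
                "source_ip": record.get("sourceIPAddress"),
            })
        elif event == IDENTITY_CHECK:
            # One source address exercising many distinct access keys is the
            # pattern a secret scanner leaves when it validates its findings.
            key_id = identity.get("accessKeyId")
            if key_id:
                checks_by_ip[record.get("sourceIPAddress")].add(key_id)

    validation_bursts = {
        ip: sorted(keys)
        for ip, keys in checks_by_ip.items()
        if len(keys) >= identity_check_threshold
    }
    return {"new_keys": new_keys, "validation_bursts": validation_bursts}
```

Grouping by source address rather than by user is a design choice: a scanner testing stolen keys touches many identities from one place, which a per-user view would hide.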

The exfiltration of 340GB of data, including automated email notifications and bounce-back messages, presents unique privacy risks. What are the specific challenges in scrubbing personal information from such unstructured datasets, and what metrics should organizations use to determine the severity of this type of exposure?

Scrubbing 340GB of uncompressed data is a logistical nightmare because you aren’t dealing with neat, searchable database rows; you are dealing with a chaotic sprawl of 51,992 individual files. About 2.22GB of that data consisted of automated notifications and bounce-back messages, which are notoriously difficult to audit because they contain raw, user-submitted content that bypasses standard data categorization. When you have over 51,000 files full of names, email addresses, and usernames, the severity isn’t just about the volume, but the “contextual sensitivity” of what those users were submitting to the Europa websites. Organizations must look at the “unique identity count” and the potential for these leaked identifiers to be used in secondary phishing campaigns against the 71 different entities affected. It is a slow, painstaking process, because every piece of personal information has to be accounted for before notifying the data protection bodies.
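To make the “unique identity count” concrete, here is a rough sketch of how such a metric could be computed over a directory of unstructured files. It treats an email address as the identity key, which is an assumption; a real audit would also need to handle names, usernames, and non-text formats. The regular expression is deliberately simple, and the function names are illustrative.

```python
import re
from pathlib import Path

# Deliberately simple pattern: it misses obfuscated addresses and can match
# strings that are not deliverable addresses.
EMAIL_RE = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")


def extract_emails(text):
    """Return the set of lowercased email addresses found in a block of text."""
    return {match.lower() for match in EMAIL_RE.findall(text)}


def unique_identity_count(root):
    """Walk a directory tree and summarize email exposure across its files."""
    identities = set()
    files_scanned = 0
    files_with_hits = 0
    for path in Path(root).rglob("*"):
        if not path.is_file():
            continue
        files_scanned += 1
        # errors="replace" keeps the scan moving past undecodable bytes.
        text = path.read_text(encoding="utf-8", errors="replace")
        found = extract_emails(text)
        if found:
            files_with_hits += 1
            identities |= found
    return {
        "files_scanned": files_scanned,
        "files_with_hits": files_with_hits,
        "unique_identities": len(identities),
    }
```

Reporting the number of affected files alongside the number of distinct identities separates volume from reach: a bounce-back message repeated across many files inflates the first figure but not the second.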

Given that a single compromised account can grant control over dozens of affiliated cloud environments, how should architecture be redesigned to prevent lateral movement? What step-by-step protocols are necessary to ensure that rotating credentials and revoking rights actually flushes a sophisticated actor out of the system?

The fact that one backend account for the Europa web hosting service could compromise 42 internal clients and 29 other Union entities highlights a dangerous lack of isolation. To prevent this, architects must move toward a “cell-based” or micro-segmentation strategy where a compromise in one cloud account cannot cascade into seventy others. When the breach was confirmed on March 27, the immediate response of deactivating and rotating credentials was necessary, but it’s often just a temporary fix if the actor has already embedded “backdoor” keys. A true flush requires a complete audit of the Identity and Access Management roles to ensure no new, unauthorized users were created during the window of compromise. You have to treat the entire environment as toxic, systematically revoking all active sessions and re-verifying the integrity of every single API key across the entire affiliated network.
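The audit step described above can be sketched as a filter over access key metadata. This is an illustration under stated assumptions, not a complete eviction procedure: the dictionaries mirror the `AccessKeyMetadata` entries returned by IAM's `ListAccessKeys` call (`UserName`, `AccessKeyId`, `Status`, `CreateDate`), the caller supplies the compromise window as timezone-aware datetimes, and the function names and the "delete" versus "rotate" labels are hypothetical.

```python
def keys_created_in_window(key_metadata, window_start, window_end):
    """Return access keys whose creation time falls inside the compromise window.

    `key_metadata` is a list of dicts with `UserName`, `AccessKeyId`, `Status`
    and a timezone-aware `CreateDate`.
    """
    flagged = [
        key for key in key_metadata
        if window_start <= key["CreateDate"] <= window_end
    ]
    return sorted(flagged, key=lambda key: key["CreateDate"])


def revocation_plan(key_metadata, window_start, window_end):
    """Build (user, key id, action) tuples for every key in the inventory.

    Keys created during the window are treated as possible backdoors and
    marked "delete"; every other key is marked "rotate", because the whole
    environment is assumed to be exposed.
    """
    suspects = keys_created_in_window(key_metadata, window_start, window_end)
    suspect_ids = {key["AccessKeyId"] for key in suspects}
    return [
        (
            key["UserName"],
            key["AccessKeyId"],
            "delete" if key["AccessKeyId"] in suspect_ids else "rotate",
        )
        for key in key_metadata
    ]
```

Note that the plan leaves no key untouched. Filtering by creation date only finds keys the actor minted; keys that existed before the window may still have been read, so they are rotated rather than trusted.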

In scenarios where public-facing web hosting services are breached, how can internal systems be effectively isolated from the cloud backend? What anecdotes from your experience illustrate the difficulty of validating that internal networks remain untouched while external databases are being actively exfiltrated?

Effective isolation relies on a strict “digital air gap” between the public cloud backend and the sensitive internal core of an organization. While the Commission confirmed that their internal systems were not affected, validating that claim during a 340GB exfiltration event is incredibly stressful for any security team. I’ve seen situations where a secondary, forgotten management tunnel or a shared administrative login becomes the bridge that lets an attacker jump from a public website’s database straight into the internal HR or financial servers. You have to monitor the “east-west” traffic with almost sensory precision, looking for even the slightest heartbeat of data moving where it shouldn’t. If your cloud-based web hosting is being picked clean by an extortion group like ShinyHunters, your only peace of mind comes from knowing that those two worlds share absolutely no underlying infrastructure or credentials.
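One way to turn “east-west” monitoring into a checkable rule is to flag any network flow with one endpoint in the public hosting range and the other in the internal range. The sketch below assumes flow records are simple dictionaries with `src` and `dst` addresses; that shape, the function name, and the address ranges in any usage are hypothetical. If the two environments are truly isolated, the result should always be empty.

```python
import ipaddress


def cross_boundary_flows(flows, public_cidrs, internal_cidrs):
    """Return flows that connect the public hosting range to the internal range.

    `flows` is an iterable of dicts with string `src` and `dst` addresses.
    Direction does not matter: traffic either way crosses the boundary.
    """
    public = [ipaddress.ip_network(cidr) for cidr in public_cidrs]
    internal = [ipaddress.ip_network(cidr) for cidr in internal_cidrs]

    def in_any(address, networks):
        ip = ipaddress.ip_address(address)
        return any(ip in network for network in networks)

    flagged = []
    for flow in flows:
        src, dst = flow["src"], flow["dst"]
        public_to_internal = in_any(src, public) and in_any(dst, internal)
        internal_to_public = in_any(src, internal) and in_any(dst, public)
        if public_to_internal or internal_to_public:
            flagged.append(flow)
    return flagged
```

A check like this only covers network paths. The shared administrative login mentioned above would not appear in flow data at all, which is why credential separation has to be verified independently.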

What is your forecast for the security of open-source vulnerability scanners and the future of supply chain integrity?

I expect we are entering a period where the very tools we rely on for protection, like Trivy, will become the primary targets for sophisticated groups looking for a high-value entry point. We will likely see a mandatory shift toward cryptographically signed software builds and a “zero-trust” approach to the DevOps pipeline where no update is trusted until its hash is independently verified. The industry will have to start “auditing the auditors,” meaning we will spend as much time securing our security scanners as we do the applications they are meant to protect. If we don’t fix the integrity of the software supply chain, these automated tools will simply become the most efficient way for hackers to harvest credentials at scale across thousands of organizations simultaneously.
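The “no update is trusted until its hash is independently verified” idea can be illustrated with a short check that runs before a downloaded tool is allowed to execute. This is a minimal sketch using only the Python standard library. It assumes the expected SHA-256 digest was obtained through a separate channel from the download itself; if both come from the same compromised source, the check proves nothing. The function names are illustrative.

```python
import hashlib
import hmac


def sha256_of_file(path, chunk_size=1024 * 1024):
    """Compute the SHA-256 hex digest of a file without loading it all at once."""
    digest = hashlib.sha256()
    with open(path, "rb") as handle:
        # Read fixed-size chunks until read() returns empty bytes at EOF.
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def verify_artifact(path, expected_sha256):
    """Return True only if the file's digest matches the pinned value.

    `expected_sha256` must come from a source independent of the download.
    """
    actual = sha256_of_file(path)
    # compare_digest performs a constant-time comparison of the two strings.
    return hmac.compare_digest(actual, expected_sha256.strip().lower())
```

A hash comparison confirms that the file matches what was pinned, not that the pinned build is benign. Cryptographic signatures on builds address a different part of the problem, namely who produced the artifact.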